The vendors who win your migration bid aren’t always the ones best qualified to execute it. Here’s how to fix that.

A poorly constructed data migration request for proposal (RFP) is one of the most expensive documents your enterprise will never notice until the cutover window closes, your lead DBA (database administrator) has been awake for nineteen hours, and the error log is longer than the data migration plan.

And then the real picture emerges: forty thousand customer records are missing, the rollback procedure exists only on paper, the CIO wants answers nobody has yet, and the business day is close enough that every minute now costs something. None of this was in the vendor’s proposal.

This scenario has a name in most IT departments. They call it the post-cutover war room, and it fills up not because the technology broke down, but because the vendor was never asked the right questions before being handed the contract.

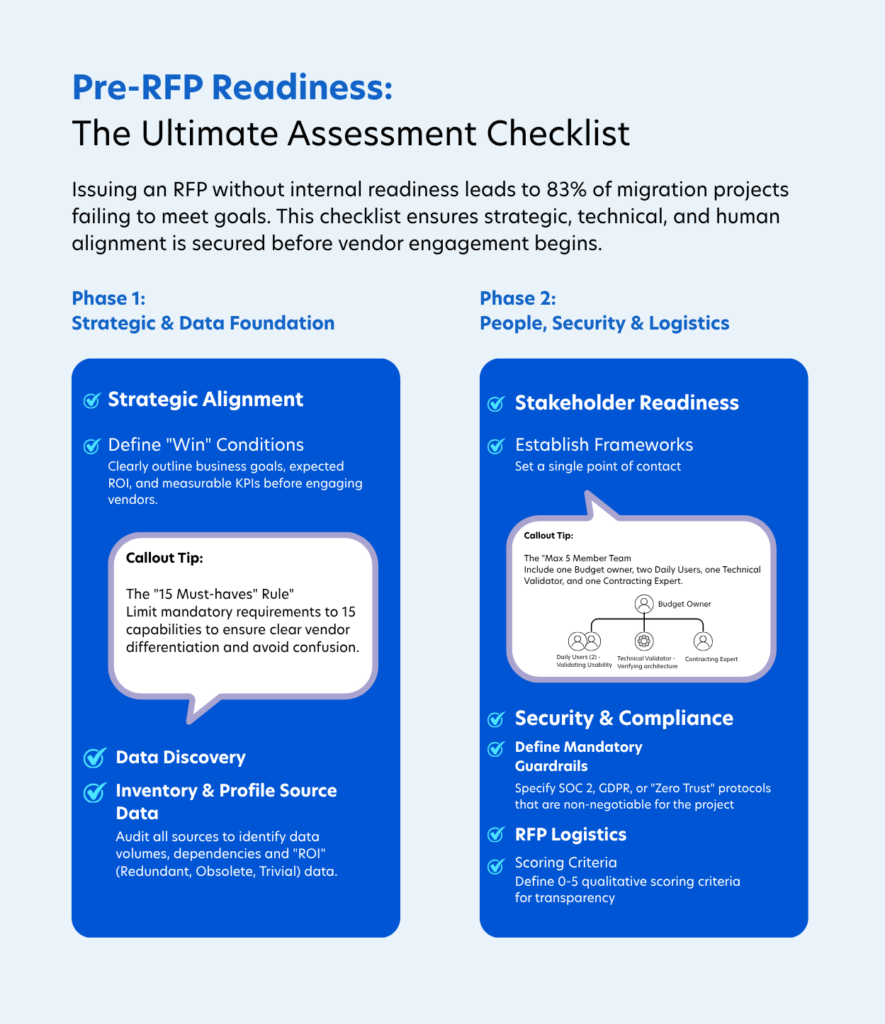

Most organizations approach the RFP as a procurement formality. The ones that resist this mindset are usually the ones that avoid last-minute fire drills and “war room” scenarios. What often gets overlooked is the need for a well-defined data migration strategy framework before any meaningful vendor conversation begins. Without that framework, there’s no shared understanding of scope, acceptable risk levels, or what success actually looks like.

This guide walks you through exactly how to build an RFP that works, from governance structure and pre-migration auditing to cutover guarantees and scoring methodology.

What a Data Migration RFP Actually Is (And What It Isn't)

A Data Migration RFP is a formal document that solicits structured, comparable solutions from technology vendors for moving data between systems, formats, or storage environments.

It’s not a shopping list. It’s a technical stress test disguised as a business document.

The RFP becomes essential the moment the investment is large enough that a wrong vendor decision cannot be easily undone. To understand why the stakes compound so quickly at enterprise scale, see why data migration matters for modern infrastructure before scoping your first stakeholder conversation. Understanding the business case early determines how seriously your internal team will treat the discovery process.

More critically, the RFP forces your internal teams to define the full scope before vendor conversations begin. Without it, you’ll receive proposals built on wildly different assumptions, and you’ll have no clean baseline to compare them against.

Key Takeaway: An RFP isn’t just a procurement process. It’s your primary tool for surfacing vendor gaps before they become your operational gaps.

The RFP Steering Committee: Keep It Small, Keep It Accountable

The fastest way to collapse an RFP process is to over-democratize it. Decision-making authority should sit with a cross-functional team of approximately five people, each with a distinct role and clear accountability.

| Role | Responsibility |
|---|---|
| Project Sponsor | Owns the budget; provides executive cover when scope expands |
| Project Manager | Drives daily accountability and tracks vendor alignment to milestones |
| Technical Validator | Data architect or DBA who pressure-tests integration claims |
| Business Analyst | Understands what the data means and connects it to business outcomes |
| Procurement Lead | Manages legal, bidding process, and commercial negotiation |

If your steering committee has twelve members, your RFP has twelve opinions and no decision-maker. Keep it tight.

The Pre-Migration Audit: What Most Enterprises Skip, and Suffer For

Post-mortems tell a version of this story constantly. A mid-market manufacturer hires a systems integrator to move 15 years of ERP data to a cloud-native platform. The integrator profiles the main transactional tables, builds a clean mapping document, and kicks off the migration.

Three weeks in, they discover a legacy pricing engine buried in a COBOL-adjacent script that feeds 22% of active customer contracts. It wasn’t in the scope. It wasn’t in the source documentation. It wasn’t found during profiling because nobody asked the right questions.

BCG estimates that roughly 70% of digital transformations fall short of their objectives. Misaligned scoping between the client environment and the vendor’s discovery process is exactly the kind of gap that feeds that figure.

A proper pre-migration audit goes far deeper than listing databases.

What "Deep" Actually Means Here

It means profiling ERPs, CRMs, SaaS silos, and legacy repositories for:

- Volume and velocity: How much data, and how fast does it change?

- Interdependencies: What breaks if this table moves, and that one doesn’t?

- ROT data: Which redundant, obsolete, or trivial records would inflate cloud storage costs and corrupt post-migration analytics?
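As a rough illustration of what ROT profiling looks for, here is a minimal sketch. The sample records, the field names, and the seven-year staleness cutoff are all assumptions made for the example; a real audit would run a dedicated profiling tool against the actual source schema.

```python
from datetime import date

# Hypothetical sample of customer records; field names are assumptions.
records = [
    {"id": 1, "email": "a@example.com", "last_updated": date(2024, 3, 1)},
    {"id": 2, "email": "a@example.com", "last_updated": date(2023, 11, 5)},  # repeats record 1
    {"id": 3, "email": "b@example.com", "last_updated": date(2012, 6, 30)},  # untouched for years
    {"id": 4, "email": None, "last_updated": date(2024, 1, 15)},             # no usable key field
]


def profile_rot(rows, as_of, stale_after_years=7):
    """Flag redundant (repeated email), obsolete (stale), and trivial (no email) rows."""
    seen_emails = set()
    report = {"redundant": [], "obsolete": [], "trivial": []}
    for row in rows:
        email = row["email"]
        if email is None:
            report["trivial"].append(row["id"])
            continue
        if email in seen_emails:
            report["redundant"].append(row["id"])
        seen_emails.add(email)
        age_years = (as_of - row["last_updated"]).days / 365.25
        if age_years > stale_after_years:
            report["obsolete"].append(row["id"])
    return report


report = profile_rot(records, as_of=date(2025, 1, 1))
print(report)
```

Even a toy profile like this gives the steering committee a concrete number to put in the RFP: how many records should never reach the target environment.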

Migrating dirty data doesn’t just cost money for storage. It quietly poisons the analytics and AI workloads you’re building the new environment to support. Gartner puts the average cost of poor data quality at $12.9 million per organization per year.

That risk compounds fast at enterprise scale. To understand how unresolved data quality issues create downstream AI-readiness failures and inflate remediation costs, explore our guide on enterprise data migration risks, which details why discovery-phase gaps consistently become the most expensive line item on post-migration incident reports.

Addressing these issues before the RFP is issued is dramatically cheaper than remediating them in production. Use our data migration checklist for IT leaders to audit your environment before a single vendor conversation begins.

Key Takeaway: A migration is only as clean as its source audit. If your vendor’s discovery process doesn’t uncover ROT data and hidden interdependencies, you’re migrating problems, not just data.

Defining Your Migration Methodology Requirements

| Feature | Big Bang Migration | Incremental (Phased) Migration | Parallel Operations |
|---|---|---|---|
| Description | All data is moved in a single, high-stakes event within a defined timeframe. | Data is moved in manageable stages, "waves," or smaller segments over time. | Both the legacy and new systems run simultaneously for a period before final cutover. |
| Risk Level | High: A single error can cause a catastrophic project failure. | Medium/Low: Risk is isolated to specific segments or phases. | Very Low: Offers the lowest risk of business disruption. |
| Timeline | Shorter: Overall project duration is typically compressed. | Longer: Requires more time to plan and execute multiple stages. | Variable: Dependent on the duration of dual-running requirements. |
| Downtime | Significant: Requires a longer, continuous window of system unavailability. | Minimal: Downtime spread across shorter, planned windows. | Near Zero: Systems remain active while synchronization occurs. |
| Cost | Lower: Reduced operational overhead and shorter project duration. | Medium: Requires more planning resources and potentially temporary interfaces. | Highest: The most expensive approach due to dual-run infrastructure costs. |
| Key Advantages | Cleaner cutover with less user confusion; lower complexity in maintaining data synchronization. | Allows for iterative learning and course correction between phases; reduces overall pressure on teams. | Provides a constant fallback option at any point; allows for exhaustive validation of data parity. |
| Key Disadvantages | No opportunity to adjust the approach based on learnings; high pressure on final validation. | Increased complexity in maintaining parallel systems and managing data dependencies between waves. | High synchronization overhead; potential for user confusion regarding which system to use for specific tasks. |

Your RFP must explicitly mandate which migration approaches are acceptable and why. Requiring an incremental migration strategy in your RFP isn’t a matter of preference. For complex enterprise environments, it’s a risk management decision.

The Three Primary Approaches

Big Bang Migration moves everything in a single event. Faster on paper, but it offers no room for error. If something goes wrong at hour 14 of a 16-hour cutover window, your recovery options are severely limited.

Incremental (Phased) Migration moves data in manageable stages, by business unit, data domain, or system tier. Each wave is tested before the next begins. This approach allows your team to learn from Wave 1 before committing Wave 5 to production.

Parallel Operations runs both source and target simultaneously for a defined period. It’s the lowest-risk option and provides a live fallback if the new system reveals unexpected behavior under real load.

For most enterprise migrations, the right answer is a hybrid: incremental by default, with parallel operations during the final cutover window. Understanding how to structure that hybrid approach is where most RFPs fall short. A well-defined data migration plan and methodology framework should specify not just which approach the vendor will use, but the criteria that trigger a switch between phases.

Why Change Data Capture (CDC) Is Non-Negotiable

Modern RFPs should require vendors to demonstrate hands-on experience with Change Data Capture (CDC), a technique that captures changes on the source as they are committed and replicates them to the target in near real time, keeping both environments synchronized throughout the migration.

CDC is what makes near-zero downtime cutovers technically achievable. Without it, you’re choosing between an extended blackout window and a risky snapshot-based migration where any transaction processed during the move is potentially lost.
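To make the idea concrete, here is a toy sketch of the mechanism. The event structure, sequence numbers, and function name are hypothetical and do not reflect the format of any particular CDC tool; the point is only that changes committed on the source after the initial snapshot are replayed on the target in order, with a checkpoint so a replay after a failure is safe.

```python
def apply_changes(target, events, last_applied_seq=0):
    """Apply ordered change events to the target and return the new checkpoint."""
    for event in sorted(events, key=lambda e: e["seq"]):
        if event["seq"] <= last_applied_seq:
            continue  # already applied, so replaying the log is harmless
        if event["op"] in ("insert", "update"):
            target[event["key"]] = event["row"]
        elif event["op"] == "delete":
            target.pop(event["key"], None)
        last_applied_seq = event["seq"]
    return last_applied_seq


# Target starts from an initial snapshot of the source (hypothetical data).
target = {101: {"name": "Acme", "balance": 250}}

# Changes committed on the source while the migration was in flight.
events = [
    {"seq": 1, "op": "update", "key": 101, "row": {"name": "Acme", "balance": 300}},
    {"seq": 2, "op": "insert", "key": 102, "row": {"name": "Globex", "balance": 75}},
    {"seq": 3, "op": "delete", "key": 101, "row": None},
]

checkpoint = apply_changes(target, events)
# Replaying the same events changes nothing because of the checkpoint.
checkpoint = apply_changes(target, events, last_applied_seq=checkpoint)
print(target, checkpoint)
```

A snapshot-only migration would have carried record 101 across with a stale balance and missed record 102 entirely. Ask vendors how their tooling handles ordering, checkpoints, and replay, because those are the details this sketch glosses over.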

Key Takeaway: Any vendor who defaults to Big Bang for complex enterprise migrations without a strong written justification is either cutting corners on planning or underestimating your environment.

The Best Data Migration RFP Questions for Audit and Validation

A vendor’s proposal tells you what they want you to believe. The right questions, specifically those targeting audit depth and validation methodology, tell you what vendors actually know. These are the categories that matter most.

On Data Audit and Profiling

These questions expose whether a vendor’s discovery process is systematic or ad hoc:

- How does your team identify hidden interdependencies in legacy systems that lack modern export capabilities?

- What automated profiling tools (such as Informatica, Talend, or equivalents) will you deploy to surface anomalies and null value patterns?

- What is your methodology for identifying and excluding ROT data during the discovery phase?

On Validation and Accuracy

Row counts are not validation. Push vendors to prove it:

- What reconciliation techniques do you use beyond row counts, specifically checksums and key financial totals?

- Can you demonstrate automated post-migration validation scripts from a comparable prior engagement?

- What specific accuracy benchmarks (such as 99.99% data parity) do you contractually guarantee in your proposal?

For large-scale financial or healthcare migrations, that last question isn’t optional. Row counts often mask deeper logic errors, record-level discrepancies that only surface weeks after go-live when finance closes the books or compliance runs an audit. To see how those gaps translate into quantifiable business risk, read our breakdown of data migration validation best practices and the true cost of bad data. Closing those gaps early is what separates a clean cutover from a post-migration remediation project.
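The difference between a row count and real reconciliation is easy to show. The sketch below uses two in-memory SQLite tables as stand-ins for a source and a target system; the table name, columns, and values are assumptions made for the example. Both tables hold three rows, so a row-count check passes, but a control total and a checksum both catch the corrupted amount.

```python
import hashlib
import sqlite3


def load(rows):
    """Create an in-memory table standing in for a source or target system."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE invoices (id INTEGER PRIMARY KEY, customer TEXT, amount_cents INTEGER)"
    )
    conn.executemany("INSERT INTO invoices VALUES (?, ?, ?)", rows)
    return conn


def reconcile(conn):
    """Return the row count, a financial control total, and a deterministic checksum."""
    count, total = conn.execute(
        "SELECT COUNT(*), COALESCE(SUM(amount_cents), 0) FROM invoices"
    ).fetchone()
    digest = hashlib.sha256()
    # Ordering by primary key keeps the checksum stable across systems.
    for row in conn.execute("SELECT id, customer, amount_cents FROM invoices ORDER BY id"):
        digest.update(repr(row).encode("utf-8"))
    return {"rows": count, "total_cents": total, "checksum": digest.hexdigest()}


source = load([(1, "Acme", 125000), (2, "Globex", 89900), (3, "Initech", 4550)])
# Same number of rows, but one amount was altered during transformation.
target = load([(1, "Acme", 125000), (2, "Globex", 89000), (3, "Initech", 4550)])

src, tgt = reconcile(source), reconcile(target)
mismatches = [name for name in src if src[name] != tgt[name]]
print(mismatches)
```

This is the kind of script to ask a vendor to demonstrate from a prior engagement. A production version would also need per-column and per-partition checks so a mismatch can be traced to specific records.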

On Data Ownership and Exit Rights

These are the questions most enterprises forget until it’s too late:

- How easy is it to extract our data from your environment? What is the process, and what does it cost?

- What format will our data be returned in: proprietary or open-standard?

Vendor lock-in during a migration is a legal and operational liability. Make data portability a scored criterion, not a footnote.

Key Takeaway: Vendors who cannot provide quantified accuracy guarantees and explicit data exit terms are telling you something important about how they operate under pressure.

Security, Governance, and the Controls Your RFP Must Demand

The security section is where strong data governance requirements separate enterprise-grade vendors from proposal writers with impressive deck design.

Zero-Trust Access Is the Baseline

Every vendor employee who touches your data should operate under a Zero-Trust framework: time-bound, logged, and fully audited access only. No standing permissions. No shared credentials. No access that outlasts the task it was granted for.

Your RFP must also require vendors to demonstrate compliance alignment with applicable standards, including GDPR, HIPAA, SOC 2 Type II, or sector-specific equivalents depending on your industry and geography.

Immutability and Ransomware Resilience

Ransomware actors have shifted tactics. They now routinely target backup repositories first, knowing that destroying recovery capability before encrypting primary systems maximizes leverage.

Your RFP must require vendors to prove their backup infrastructure is both immutable and air-gapped. Specifically, ask for evidence of object-level immutability controls (such as AWS S3 Object Lock or equivalent) that prevent backup modification or deletion even by privileged users.

This is verifiable. Ask for it in writing, not as a checkbox on a compliance questionnaire. Strong governance during a migration isn’t just about protecting data in transit; it’s about maintaining chain-of-custody visibility across every transformation, movement, and access event from day one of discovery through final cutover. Our enterprise data governance framework guide maps those controls directly to RFP requirements that hold vendors contractually accountable. Use it as your baseline before issuing the document.

Key Takeaway: A vendor’s security posture during your migration previews how they’ll handle incidents after it. Zero-trust access and immutable backups aren’t optional for enterprise environments.

Engineering Minimal Downtime: What to Ask For and How to Verify It

Every vendor promises minimal downtime. The question worth asking is: what happens in the 40 minutes after something goes wrong at hour 14?

Vendors who have a real answer give you a step count and a time. Everyone else gives you reassurance, which is not the same thing.

Your RFP’s downtime section should require vendors to answer that question directly, with documented evidence rather than assurances.

Pilot Migrations Are Mandatory

Mandate a pilot migration before any production cutover. Make sure the vendor selects data that reflects the real complexity of your environment. A pilot built on clean, simple tables tells you nothing useful.

A well-designed pilot, one that deliberately includes your most complex tables rather than your cleanest ones, will surface the majority of production-blocking issues before they have a business impact. That’s the point of the exercise. Any vendor who resists a pilot is telling you they either haven’t built one or don’t expect it to perform well.

Rollback Procedures Must Be Tested

Ask vendors to submit their rollback plan as a step-by-step executable document, not a conceptual summary. Then ask: When was this procedure last tested in a staging environment comparable to ours?

If the answer is “we’ll test it during the pilot,” that’s not a rollback plan. That’s an aspiration.

The Seamless Cutover Guarantee: What It Should Actually Include

A seamless cutover guarantee in a data migration RFP isn’t just a line in a service agreement. It should specify:

- The maximum acceptable downtime window (in minutes, not hours)

- The exact trigger conditions that activate rollback

- Who has authority to call the rollback decision

- Documented evidence of the last test run
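One way to test whether a guarantee is specific enough is to check that it could be written down as data and evaluated mechanically. The sketch below is an illustration only: the class, the field names, the thresholds, and the test date are hypothetical, and real trigger conditions would come out of contract negotiation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CutoverGuarantee:
    max_downtime_minutes: int  # hard ceiling agreed in the contract
    min_data_parity: float     # for example 0.9999 for 99.99% parity
    rollback_authority: str    # named role that makes the call
    last_rollback_test: str    # date of the last documented test run


def rollback_triggers(guarantee, elapsed_minutes, measured_parity, failed_critical_checks):
    """Return the trigger conditions that are currently met (empty list means proceed)."""
    reasons = []
    if elapsed_minutes > guarantee.max_downtime_minutes:
        reasons.append("downtime window exceeded")
    if measured_parity < guarantee.min_data_parity:
        reasons.append("data parity below guaranteed threshold")
    if failed_critical_checks > 0:
        reasons.append("critical validation check failed")
    return reasons


# Hypothetical terms and a hypothetical mid-cutover status check.
guarantee = CutoverGuarantee(
    max_downtime_minutes=45,
    min_data_parity=0.9999,
    rollback_authority="Project Sponsor",
    last_rollback_test="2025-01-15",
)
reasons = rollback_triggers(
    guarantee, elapsed_minutes=30, measured_parity=0.9991, failed_critical_checks=0
)
print(guarantee.rollback_authority, reasons)
```

If a vendor’s guarantee cannot be reduced to thresholds like these, the rollback decision will be made by argument in the war room rather than by the contract.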

Blue-Green Deployment for mission-critical systems goes a step further, running two identical production environments and switching traffic instantaneously, which removes the binary choice between “migration complete” and “everything is down.”

Key Takeaway: Downtime guarantees must be backed by tested, documented procedures. Require proof of the last rollback test, not a promise that one exists.

How to Score Vendors Without Bias or Noise

Subjective vendor selection is how expensive mistakes get made. A weighted scoring matrix removes preference from the equation and forces apples-to-apples comparison across proposals.

Recommended Weighting Framework

| Evaluation Category | Weight | What to Probe |
|---|---|---|
| Technical Fit | 30% | Stack compatibility, API robustness, scalability approach |
| Integrations | 25% | HRIS, CRM, and core enterprise system connectivity |
| Security & Compliance | 20% | SOC 2/ISO certifications, encryption, audit trails |
| Experience & References | 15% | Prior migrations of comparable scale and complexity |
| Total Cost of Ownership | 10% | Long-term licensing, support, and data exit costs |

The 0–5 Scoring Scale

Apply a numeric scale to each category for each vendor, anchored at three reference points:

- 0 (Non-compliant): No response or requirement not addressed

- 3 (Meets Requirements): Standard performance; objective is satisfied

- 5 (Exceeds Requirements): Vendor offers measurably superior methodology or demonstrated innovation

This scale prevents the common problem of every vendor scoring “good enough” across every category. Force differentiation, especially in Technical Fit and Security, where the gap between a 3 and a 5 often represents millions in post-migration risk.
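The arithmetic behind the matrix is simple enough to sketch. The weights below come from the recommended framework table; the two vendors and their scores are hypothetical.

```python
# Category weights in percent, taken from the weighting framework.
WEIGHTS = {
    "Technical Fit": 30,
    "Integrations": 25,
    "Security & Compliance": 20,
    "Experience & References": 15,
    "Total Cost of Ownership": 10,
}


def weighted_score(scores):
    """Combine 0-5 category scores into one weighted score on the same 0-5 scale."""
    if set(scores) != set(WEIGHTS):
        raise ValueError("every category must be scored exactly once")
    if any(not 0 <= s <= 5 for s in scores.values()):
        raise ValueError("scores must be between 0 and 5")
    return sum(WEIGHTS[category] * s for category, s in scores.items()) / 100


# Hypothetical vendors scored by the steering committee.
vendor_a = {
    "Technical Fit": 5,
    "Integrations": 3,
    "Security & Compliance": 5,
    "Experience & References": 3,
    "Total Cost of Ownership": 3,
}
vendor_b = {
    "Technical Fit": 3,
    "Integrations": 5,
    "Security & Compliance": 3,
    "Experience & References": 3,
    "Total Cost of Ownership": 5,
}

score_a = weighted_score(vendor_a)
score_b = weighted_score(vendor_b)
print(f"Vendor A: {score_a:.2f}  Vendor B: {score_b:.2f}")
```

Both vendors earn the same raw total of 19 points, yet the weighting separates them because Vendor A’s strengths sit in the heavier categories. That is the differentiation the matrix exists to force.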

A scoring matrix only works if the right vendors are in it. Our data migration strategy guide covers the qualification criteria that help you filter proposals before they reach formal evaluation.

Key Takeaway: A weighted matrix only works if your scoring criteria are defined before proposals are received. Set the rubric first. Score second.

Where to Find Vendors Worth Evaluating

The platform where you post your data migration RFP determines who responds to it. Posting in the right places is as important as what the document says.

Enterprise procurement platforms manage the full RFx lifecycle with built-in audit trails, essential for regulated industries where sourcing decisions must be defensible to compliance and legal stakeholders.

Vendor marketplaces provide vetted IT service provider directories that filter out generalist agencies without migration-specific track records. The signal-to-noise ratio is significantly better than open solicitation.

Consulting partnerships with existing digital transformation leaders accelerate sourcing considerably. These partners often maintain migration accelerators: pre-built tooling and methodology frameworks that reduce both timeline and implementation risk from day one.

Regardless of channel, apply a hard filter: any vendor who cannot provide references from migrations of comparable scale and complexity completed within the last 18 months should not advance to the evaluation stage.

Real-World Proof: What a Structured RFP Process Delivers

A leading U.S.-based waste management company runs a growth strategy built on monthly acquisitions. Each acquisition required rapid data integration into centralized enterprise systems, and the existing process couldn’t keep up.

Every migration cycle took nearly two months, with over 50% of that timeline consumed by manual, Excel-based schema mapping requiring three to four SMEs per cycle. A growing acquisition pipeline was making the approach unsustainable.

Everforth Quinnox replaced the manual workflow with an AI-powered schema mapping accelerator. The solution automated extraction and mapping with confidence scoring, routing only the 10–15% of low-confidence mappings to SMEs for review.

The outcome was immediate. Migration time dropped from two months to three weeks, schema mapping time was cut by 75%, and the company achieved a 2.67x increase in M&A pipeline capacity.

For the full breakdown, read the data integration transformation case study.

Key Takeaway: The bottleneck wasn’t data volume. It was a manual process that couldn’t scale. The right framework doesn’t just accelerate one migration; it removes the constraint from every migration that follows.

The Five-Week Migration Timeline: A Practical Execution Model

Once your vendor is selected, execution follows a structured cycle that keeps accountability clear and prevents the scope drift that kills timelines.

Week 1 – Export: Internal IT teams extract legacy data using agreed-upon tooling and formats documented in the migration specification.

Week 2 – Format: The vendor PM reformats and transforms data according to the mapping documentation developed during discovery.

Week 3 – Sandbox Import: Joint client-vendor teams import data into a non-production environment for rigorous testing against pre-defined validation scripts.

Week 4 – Business Validation: Business leads (not just IT) review data accuracy through sample checks and reconciliation against source system totals.

Week 5 – Production Cutover: Final import and go-live following formal sandbox sign-off from both technical and business stakeholders.

Each week has a deliverable. Each deliverable has an owner. There is no ambiguity about who signs off before the next phase begins. To make sure nothing falls through the gaps across all five stages, download our enterprise data migration checklist before your first kickoff call.

Key Takeaway: A five-week phased timeline with formal stage gates isn’t a constraint. It’s a forcing function that prevents the undefined scope creep that extends migrations from weeks to quarters.

Your Migration Starts Before You Send the First RFP

A well-crafted RFP doesn’t just improve vendor selection. It fundamentally changes how your internal teams understand the migration. The process of answering the questions you’ll later ask vendors forces your organization to define what success looks like.

Teams that finish the RFP process often discover that the document itself is secondary. What they built while writing it (shared definitions, agreed-upon thresholds, and a common picture of what ‘done’ looks like) is what actually protects the project.

Before issuing your RFP, work through our enterprise data migration checklist to confirm every dimension of your environment has been accounted for, from source audit to cutover sign-off. It’s the fastest way to find the gaps your vendor’s proposals won’t surface on their own.

At Everforth Quinnox, we’ve supported enterprises across financial services, manufacturing, and healthcare in scoping, governing, and executing complex data migrations, from legacy ERP transitions to multi-cloud consolidation programs.

Our work is powered by the Everforth Quinnox AI (QAI) Studio platform, which provides the observability and orchestration layer needed to keep migration workflows transparent and accountable from discovery through cutover. To understand how those capabilities map to real data migration challenges, learn more about how Everforth Quinnox supports enterprise migrations.

Assistant Manager, Marketing, Everforth Quinnox

FAQs Related to Data Migration

A Data Migration RFP is a structured document that defines an organization’s technical requirements and business expectations to solicit comparable proposals from technology vendors.

It is necessary because without one, vendors build proposals on different assumptions; scopes vary wildly, and there is no objective baseline to evaluate responses against. The RFP forces internal teams to define what success looks like before a vendor is involved.

A realistic end-to-end RFP timeline runs approximately 24 weeks: nine weeks for discovery and planning, ten weeks for vendor evaluation and shortlisting, and five weeks for legal review and contract award.

The execution phase that follows runs an additional five weeks, moving from data extraction in Week 1 to production cutover in Week 5, with formal sign-off required at each stage before the next begins.

To get accurate, comparable pricing, provide vendors with a granular view of your data landscape upfront: volumes, structures, legacy complexity, and known interdependencies. Vague scopes produce either defensive pricing or underpriced bids that recover costs through change orders.

Then ask for an itemized breakdown covering setup costs, licensing fees, implementation support, and post-migration hypercare. Bundled pricing makes comparison impossible and hides where the real expenses sit.