AI has moved from hype to hard reality, and nowhere has the gap between ambition and execution been wider than in the world of Proofs of Concept. Across industries, leaders are racing to prove AI’s potential – yet most projects stall long before they deliver measurable business value.

McKinsey’s State of AI 2025 report reveals the uncomfortable truth – fewer than one in three organizations follow the scaling practices required to capture value, and only 1% of AI leaders can claim their systems are mature and embedded across workflows. Most others are still stuck proving what they already know: that AI can work, just not here yet.

This widening gap is creating a new kind of FOMO among enterprises – the fear of being left behind in an AI-driven economy that rewards execution, not experimentation. Those who master the transition from pilot to production are not just innovating; they are building a moat of competitive advantage.

In this blog, we unpack why most AI Proofs of Concept fail, where the scaling journey breaks down, and how leading organizations are turning pilot projects into enterprise-wide value engines. We’ll also look at real examples and proven steps to make your next AI initiative one that actually delivers.

What Is an AI Proof of Concept

An AI proof of concept is a time-boxed experiment that demonstrates technical feasibility and directional business value for a specific use case. A sound PoC proves four things: the data is available and fit for the task, the model can hit a target metric repeatably, the workflow can ingest and act on the output, and the risks can be governed.

A PoC is not a production system. It is a low-risk rehearsal that should answer go-or-no-go questions about data, model, and operating model within weeks rather than quarters.

Effective PoCs define a clear success metric that maps to a business KPI, for example a two-point lift in conversion, a reduction in false positives, or minutes saved per case. They also define what happens next if the bar is met, including the minimal production pathway, the owners, and the budget to move forward. McKinsey notes that tracking defined KPIs and having a clear road map for adoption are among the practices most correlated with EBIT impact from AI.

Check out this detailed blog on What Is AI Proof of Concept (PoC) & How to Build One

Why AI Proofs of Concept Fail

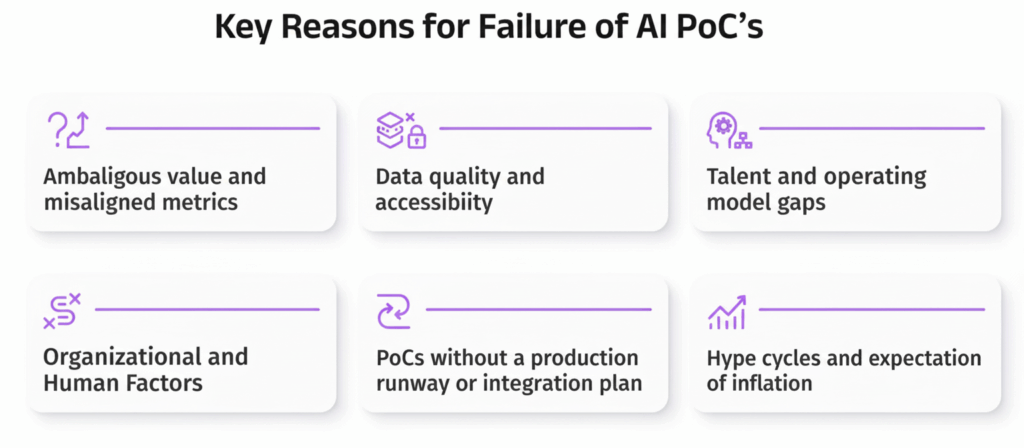

AI Proofs of Concept rarely fail because of model performance; they fail because of organizational fundamentals. The technology often works – it’s the translation of value, scale, and governance that collapses. Let’s unpack why.

1. Ambiguous value and misaligned metrics

Many AI pilots start with technical enthusiasm but lack a clear business goal. Teams measure accuracy or precision but fail to connect those metrics to outcomes that matter – revenue, cost reduction, risk, or customer satisfaction. McKinsey’s survey shows that companies are still early in adopting the practices that connect AI to outcomes, such as KPI tracking and workflow redesign, which suppresses bottom-line impact.
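One way to connect a model metric to money is to put a price on each cell of the confusion matrix. The sketch below is illustrative only: the function names, the fraud-alert scenario, and every dollar figure are assumptions made up for the example, not figures from any survey cited here.

```python
def precision(true_pos: int, false_pos: int) -> float:
    """Share of alerts that were real cases."""
    return true_pos / (true_pos + false_pos)


def net_value(
    true_pos: int,
    false_pos: int,
    false_neg: int,
    value_per_catch: float,
    cost_per_review: float,
    loss_per_miss: float,
) -> float:
    """Net monetary value of a classifier over one evaluation period.

    Every alert (true or false positive) costs a manual review,
    every caught case saves money, every missed case loses money.
    """
    review_cost = (true_pos + false_pos) * cost_per_review
    return true_pos * value_per_catch - review_cost - false_neg * loss_per_miss


# Hypothetical unit economics for a fraud-alert use case.
ECONOMICS = dict(value_per_catch=1000.0, cost_per_review=25.0, loss_per_miss=1000.0)

# Two hypothetical models scored on the same 100 fraud cases.
model_a = dict(true_pos=80, false_pos=400, false_neg=20)  # noisy but catches more
model_b = dict(true_pos=70, false_pos=50, false_neg=30)   # precise but misses more

for name, counts in (("A", model_a), ("B", model_b)):
    p = precision(counts["true_pos"], counts["false_pos"])
    v = net_value(**counts, **ECONOMICS)
    print(f"model {name}: precision={p:.2f}, net value={v:,.0f}")
```

With these made-up numbers the model with the lower precision produces the higher net value, which is the kind of mismatch a pilot misses when it reports only the technical metric.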

2. Data quality and accessibility

The number one barrier to AI success remains poor data readiness. Models trained on fragmented, biased, or outdated data inevitably fail in production.

According to CIO Research, over 90% of enterprise data is unstructured, residing in emails, documents, and chat logs. A widely cited IBM estimate puts the cost of bad data to the U.S. economy at over $3 trillion annually, driven by inefficiencies and decision errors.

3. Talent and operating model gaps

Most organizations have data scientists but lack the supporting ecosystem – MLOps engineers, cloud architects, security experts, and product owners – needed to operationalize AI. The result is a brilliant model trapped in a Jupyter notebook. Without proper deployment pipelines, monitoring tools, or governance frameworks, even high-performing PoCs never make it into production.

For example, a major retail chain built an AI-based pricing model that worked perfectly in test environments. But without MLOps pipelines for continuous retraining and integration into ERP systems, it stalled after the pilot.

4. PoCs without a production runway or integration plan

Gartner estimates that only 54% of AI projects make it past the pilot stage, and fewer than 15% deliver measurable business outcomes at scale. This “pilot trap” happens because PoCs are designed for proof, not performance. They lack deployment planning, governance, and maintenance budgets.

5. Hype cycles and inflated expectations

AI’s reputation for overpromising and underdelivering is rooted in inflated expectations. According to industry research, 41% of executives admitted their organizations invested in AI without clear success metrics, often driven by competitive FOMO.

The result: big spending, small results. Despite billions invested, only a handful of large enterprises – such as JPMorgan, Google, and Unilever – have achieved enterprise-grade AI maturity. They succeed not by chasing hype, but by focusing on disciplined execution, governance, and repeatable value creation.

Don’t let your AI PoC become a statistic. Download our step-by-step AI PoC Strategic Roadmap.

Frameworks, success metrics, and real enterprise case studies – all built so your PoC is designed to scale from day one.

How to Fix AI PoC Failures

Turning around a struggling AI PoC is less about reinventing the model and more about rethinking the method. Success comes from discipline, clarity, and early focus on what truly drives value. Here’s how leading organizations turn pilots into production-ready systems that deliver measurable impact:

Start with a business problem and a measurable KPI:

Tie model metrics to money or risk. Define a target and a confidence interval, then commit to a decision if the bar is met. McKinsey finds that tracking defined KPIs for AI solutions has the strongest link to EBIT impact among the practices it examined.
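As a rough illustration of "a target, a confidence interval, and a committed decision", the sketch below compares conversion in a control group and a model-assisted group. It assumes a simple normal-approximation (Wald) interval for the difference in rates; the names `ArmResult`, `lift_confidence_interval`, and `decide`, the three-way go / no-go / extend rule, and all counts are hypothetical choices for this example.

```python
import math
from dataclasses import dataclass


@dataclass
class ArmResult:
    conversions: int
    visitors: int

    @property
    def rate(self) -> float:
        return self.conversions / self.visitors


def lift_confidence_interval(control: ArmResult, treatment: ArmResult, z: float = 1.96):
    """Approximate 95% interval for the absolute lift (treatment rate minus control rate)."""
    diff = treatment.rate - control.rate
    std_err = math.sqrt(
        control.rate * (1 - control.rate) / control.visitors
        + treatment.rate * (1 - treatment.rate) / treatment.visitors
    )
    return diff - z * std_err, diff + z * std_err


def decide(control: ArmResult, treatment: ArmResult, target_lift: float) -> str:
    """Pre-agreed rule: act only on what the interval supports."""
    low, high = lift_confidence_interval(control, treatment)
    if low >= target_lift:
        return "go"        # even the pessimistic estimate clears the bar
    if high < target_lift:
        return "no-go"     # even the optimistic estimate misses the bar
    return "extend"        # inconclusive: collect more data before deciding


# Hypothetical pilot: the target is a two-point lift in conversion.
control = ArmResult(conversions=1000, visitors=20000)
treatment = ArmResult(conversions=1600, visitors=20000)
print(decide(control, treatment, target_lift=0.02))
```

Writing the rule down before the pilot starts is the point: the team agrees in advance what result triggers funding, what result ends the experiment, and what result only buys more time.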

Fix the data first:

Profile the data, document lineage, and close the quality gaps that will invalidate model outputs. Treat unstructured content as a product, with owners, freshness targets, and validation. Programs that addressed knowledge quality up front saw order-of-magnitude improvements in downstream accuracy.
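A first profiling pass does not need heavy tooling. The following sketch, using only the Python standard library, computes missing-value rates and a freshness check over a list of records. The field names (`body`, `owner`, `updated_at`), the thresholds, and the helper names are assumptions for illustration; a real program would profile against its own schema and service levels.

```python
from datetime import datetime, timedelta, timezone


def profile_records(records, required_fields, timestamp_field, max_age_days, now=None):
    """Return missing-value rates per required field and the share of stale records."""
    if not records:
        raise ValueError("no records to profile")
    now = now or datetime.now(timezone.utc)
    total = len(records)
    missing_rate = {
        field: sum(1 for r in records if r.get(field) in (None, "")) / total
        for field in required_fields
    }
    cutoff = now - timedelta(days=max_age_days)
    # A record with no timestamp is counted as stale, since its age is unknown.
    stale = sum(
        1 for r in records
        if r.get(timestamp_field) is None or r[timestamp_field] < cutoff
    )
    return {"rows": total, "missing_rate": missing_rate, "stale_rate": stale / total}


def quality_gaps(profile, max_missing_rate, max_stale_rate):
    """List the checks that breach the agreed thresholds."""
    gaps = [
        f"{field}: {rate:.0%} missing"
        for field, rate in profile["missing_rate"].items()
        if rate > max_missing_rate
    ]
    if profile["stale_rate"] > max_stale_rate:
        gaps.append(f"{profile['stale_rate']:.0%} of records are stale")
    return gaps


# Hypothetical knowledge-base articles.
NOW = datetime(2025, 6, 1, tzinfo=timezone.utc)
articles = [
    {"body": "Reset steps", "owner": "support", "updated_at": NOW - timedelta(days=10)},
    {"body": "", "owner": "support", "updated_at": NOW - timedelta(days=30)},
    {"body": "Refund policy", "owner": None, "updated_at": NOW - timedelta(days=400)},
    {"body": "Shipping FAQ", "owner": "", "updated_at": NOW - timedelta(days=5)},
]
report = profile_records(articles, ["body", "owner"], "updated_at", max_age_days=180, now=NOW)
print(quality_gaps(report, max_missing_rate=0.3, max_stale_rate=0.2))
```

The output is a short list of breached checks that can be assigned to the data owner before any model training begins.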

Design the production pathway on day one:

Specify where the model will run, how it will be invoked, how features will be computed, and how outputs will flow into the workflow or application. Choose a minimal viable production pattern, for example batch scoring into the system of record, before you promise real time. The difference between a demo and a deployment is the path to integration.

Invest in MLOps foundations:

Version everything: data, code, and models. Establish continuous integration and delivery for models, automated tests, canary releases, observability, and retraining triggers. Deloitte’s data on skill gaps explains many stalled programs, so plan for the people as well as the platform.

Rewire the workflow, not just the model:

Decide exactly what a human will do with the model output. Create user interfaces that make decisions explainable and auditable. McKinsey’s analysis shows that embedding solutions in processes and redesigning workflows are central to value creation.

Pilot with production data and guardrails:

Use representative data sets with privacy controls, role-based access, and safety checks. Define limits on automatic actions and explicit criteria for human-in-the-loop escalation.
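Limits on automatic actions and escalation criteria are easiest to audit when they live in one explicit rule rather than scattered through application code. The sketch below shows one possible shape for such a rule. The `Guardrails` fields, the default thresholds, the role name, and the three routes are all hypothetical; the actual limits should come from your own risk policy.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


@dataclass(frozen=True)
class Guardrails:
    min_confidence: float = 0.90      # below this, a person decides
    max_auto_amount: float = 500.0    # above this, a person decides
    blocked_roles: frozenset = frozenset({"contractor"})  # roles with no automatic actions


def route_decision(confidence, amount, requester_role, rails=Guardrails()):
    """Return (route, reason) so every decision carries an auditable explanation."""
    if requester_role in rails.blocked_roles:
        return Route.BLOCK, "role is not permitted to trigger automatic actions"
    if confidence < rails.min_confidence:
        return Route.HUMAN_REVIEW, "model confidence below threshold"
    if amount > rails.max_auto_amount:
        return Route.HUMAN_REVIEW, "amount exceeds automatic-action limit"
    return Route.AUTO_APPROVE, "within guardrails"


# Hypothetical pilot cases.
for case in [(0.95, 100.0, "analyst"), (0.80, 100.0, "analyst"), (0.95, 1000.0, "analyst")]:
    route, reason = route_decision(*case)
    print(case, "->", route.value, "|", reason)
```

Returning the reason alongside the route keeps the escalation criteria visible to reviewers and makes the pilot’s behavior easy to report to governance owners.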

Govern for trust and risk:

Assign accountable owners, adopt model risk policies, and report on fairness, robustness, security, and privacy. CEO or board oversight of AI governance is associated with greater value realization, so elevate governance beyond the data science team.

Budget for the last mile:

Reserve funding for integration, change management, and enablement. A promising model without instrumentation, runbooks, and training will stall at the handoff.

Related Read: A leader’s guide to bridging AI skills gap

Lessons from Successful AI Proof of Concepts

Success leaves clues. The companies that convert PoCs into value tend to do the following:

They pick use cases with clear economics:

Fraud reduction, retention uplift, demand forecasting, and maintenance planning have visible ROI and fast feedback loops. Banks that apply agent-based automation to know-your-customer (KYC) and financial crime operations report very large productivity gains because many steps can be orchestrated end-to-end with human oversight for exceptions.

They make data a product:

They treat knowledge bases and feature stores like living products, with owners and service levels. By cleaning and structuring the unstructured content that AI depends on, they raise the ceiling on model accuracy and consistency.

They prove value in the workflow:

They integrate the model into the actual system of work, then measure impact in the place where value is created. This is why workflow redesign is a top driver of impact in McKinsey’s research.

They build platforms and reusable patterns:

Rather than one-off pilots, leaders create reusable data pipelines, monitoring, and compliance patterns. As McKinsey notes, thinking beyond individual use cases and creating foundational infrastructure allows new functionality to be deployed faster and cheaper, which compounds the advantage.

They close the talent and ownership gaps:

They seat product managers with data scientists, add MLOps engineers and architects, and give a single leader accountability for adoption and risk. This addresses the skill shortages that keep many pilots from graduating.

They scale deliberately:

They use phased rollouts, track adoption KPIs, and maintain tight feedback loops into model improvement. McKinsey shows that fewer than one in five organizations track KPIs for gen AI solutions today, which is a clear opportunity to differentiate.
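A phased rollout can be expressed as a small gate that is evaluated at the end of each stage. The sketch below is one hypothetical version: the stage sizes, the `StageMetrics` fields, the thresholds, and the advance / hold / roll back rule are assumptions for illustration rather than a prescribed method.

```python
from dataclasses import dataclass

# Share of users or traffic exposed at each stage (illustrative values).
ROLLOUT_STAGES = [0.05, 0.25, 0.50, 1.00]


@dataclass
class StageMetrics:
    adoption_rate: float   # share of eligible users acting on the model output
    kpi_delta: float       # change in the business KPI vs. baseline (positive is better)
    incident_count: int    # safety, quality, or compliance incidents in the stage


def next_stage(stage, metrics, min_adoption=0.6, min_kpi_delta=0.0, max_incidents=0):
    """Return (new_stage_index, action) for the stage that just finished."""
    if metrics.incident_count > max_incidents or metrics.kpi_delta < min_kpi_delta:
        return max(stage - 1, 0), "roll back"
    if metrics.adoption_rate < min_adoption:
        return stage, "hold"       # the model works but people are not using it yet
    if stage == len(ROLLOUT_STAGES) - 1:
        return stage, "steady"     # already at full rollout
    return stage + 1, "advance"


# Hypothetical review at the end of the 25% stage.
stage, action = next_stage(1, StageMetrics(adoption_rate=0.7, kpi_delta=0.01, incident_count=0))
print(f"{action}: next exposure {ROLLOUT_STAGES[stage]:.0%}")
```

Keeping adoption as its own gate separates "the model is wrong" from "the workflow has not changed", which call for different fixes.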

Sign up for Everforth Quinnox AI (QAI) Studio today to get an AI PoC personalized to your business goals. In addition, get complimentary access to 70+ AI use cases here: Everforth Quinnox AI (QAI) Studio – Your One-stop AI Innovation Hub

An AI PoC is more than just a technical experiment—it’s a strategic investment. At QAI Studio, we align every PoC with clear business goals to ensure meaningful outcomes.

– Krishna Kumar, VP, Data & AI, Everforth Quinnox

Conclusion

AI is loaded with promise, but potential alone doesn’t drive results. Many Proofs of Concept stall not because the algorithm fails, but because the business foundation does: ambiguous goals, poor data, missing infrastructure, and no roadmap for production.

Here’s where Everforth Quinnox AI (QAI) Studio steps in. At Everforth Quinnox, our QAI Studio transforms ambitious pilots into operational value by providing rapid prototyping, aligned use-case prioritization, synthetic data tools, and pre-configured AI infrastructure. We don’t just build models – we engineer their success path.

The real value of AI emerges when models drive decisions, workflows shift, and outcomes materialize. With QAI, you’re not just launching a project – you’re rewiring how work gets done, so AI becomes part of your business’s DNA.

So, why wait? Claim your complimentary AI PoC today and get access to 70+ AI use cases.

FAQs Related to AI PoC Failures

An AI PoC is a small-scale test to validate if an AI solution can deliver real business value before full deployment. It helps assess feasibility, data quality, and ROI potential.

They fail due to unclear goals, poor data quality, limited infrastructure, and a lack of business alignment. Studies show fewer than 30% of AI pilots ever reach production.

Start with clear success metrics, strong data foundations, and cross-functional teams. Embedding MLOps early, with support from services like QAI Studio, helps ensure faster, scalable results.

Scaling. Moving from a pilot to production requires integration, governance, and performance management – the step where most AI projects stall.

Four to eight weeks is common for a focused use case. The PoC should produce a decision package that includes model results, a production design, expected impact, risk controls, and a go-or-no-go recommendation.

Pick a problem with visible economics, reliable data access, and a clear decision point in a workflow. Fraud alerts that reduce losses, retention models that trigger targeted offers, and demand forecasts that drive inventory move the needle quickly.

Expect that many experiments will not progress. Multiple industry analyses indicate that a large share of AI initiatives do not make it to production. What matters is your program discipline, data quality, and whether you invest in MLOps and workflow integration from the start.