No enterprise begins its digital transformation journey expecting its testing strategy to be the bottleneck. Yet for many organizations, that’s exactly what happens. Projects stall, defects leak into production, teams burn out, automation produces diminishing returns, and leadership begins to question why, despite investing in tools, resources, and frameworks, quality remains inconsistent.

The uncomfortable truth is this: most enterprise testing strategies fail not because of lack of effort, but because they are built on assumptions that no longer hold true in the modern enterprise ecosystem.

From legacy architectures to tangled stakeholder structures, from rushed automation initiatives to unrealistic coverage expectations, enterprises face a unique mix of constraints that smaller firms simply do not. Enterprise testing is both more critical and more complex, and when testing approaches don’t evolve accordingly, the quality program collapses under its own weight.

This blog dives deep into the reasons enterprise test strategies fail, what makes enterprise testing distinctly difficult, and how organizations can redesign their quality strategy to be resilient, scalable, and aligned with the velocity of modern delivery.

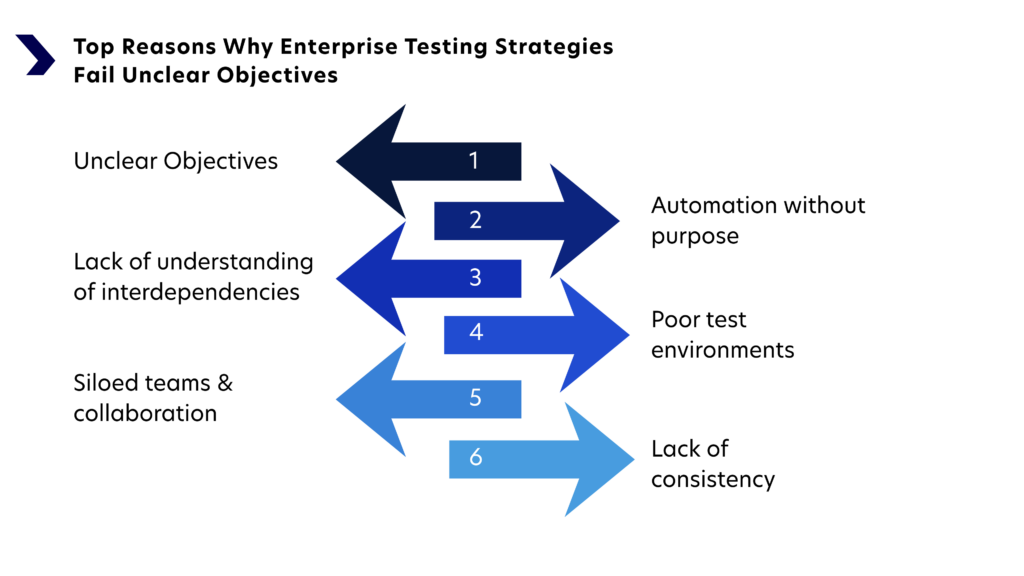

Top Reasons Why Enterprise Test Strategies Fail

If your testing cycles feel longer than they should, automation isn’t delivering the ROI you expected, or production issues keep resurfacing, you’re not alone.

Many enterprises face the same challenges with their enterprise applications. The surprising part? The reasons behind these failures are more predictable and more preventable than most teams realize.

Let’s break down the key factors:

1. Testing Is Not Fully Aligned With Business Priorities

Most enterprise application testing teams operate in “checklist mode,” executing scripts and completing cycles because the process requires it, not because the outcomes matter. As a result, without explicit alignment to business-critical processes and customer-impact areas, test efforts become scattered. Teams end up over-testing low-risk features and under-testing the workflows that really matter to business continuity.

A test strategy that isn’t tied to business value is simply busywork disguised as quality assurance.

2. Automation Is Implemented Without Clear Purpose

Enterprises often rush into automation expecting it to solve quality bottlenecks. Instead, they end up with:

- brittle UI scripts that constantly break,

- automation covering simple scenarios but ignoring complex ones,

- tools purchased without adequate skills to use them,

- and frameworks that are architecturally inconsistent across teams.

Automation amplifies whatever system it’s introduced into. If the testing process is broken, automation will simply make it fail faster.

3. Inadequate Understanding of Interdependencies

Enterprise applications rarely exist in isolation. They integrate with multiple upstream and downstream systems, data warehouses, identity platforms, and legacy components. When test strategies don’t consider the complexity of these interdependencies, defects slip through integration layers unnoticed.

4. Poor Test Data and Environment Management

Test environments are the silent saboteurs in enterprise QA. Inconsistent configurations, unavailable services, data privacy constraints, and outdated test data lead to flaky results and slow cycles. Teams spend more time fixing environments than actually testing.

5. Siloed Teams and Communication Gaps

Enterprises often separate development, testing, DevOps, infrastructure, and business teams into rigid silos. Each group works toward its own goals, not shared outcomes. This fragmentation widens the gap between application changes, test readiness, and release timelines.

6. Lack of Continuous Testing Adoption

While CI/CD has become the gold standard, continuous testing remains an aspiration rather than a reality in many enterprises. Without shift-left practices, early validation, and rapid feedback loops, testing stays at the tail end of the pipeline, creating bottlenecks and crippling release velocity.

Enterprise Applications – What Makes Testing So Challenging?

Enterprise applications are complex, mission-critical, and deeply embedded into business ecosystems. This means testing them requires more than technical proficiency. It demands domain context, architectural understanding, and operational awareness.

Here are the core factors that elevate the testing challenge:

1. Legacy Systems Mixed with Modern Architecture

Most enterprise systems are not greenfield. They’re layered, patched, upgraded, extended, and intertwined over years – sometimes decades. In such a landscape, you may find:

- mainframe applications talking to cloud-native apps

- ERP systems interacting with microservices

- SaaS systems integrated with custom internal applications

Legacy systems are difficult to test because documentation is often outdated, dependencies are hidden, and modifications can have cascading effects.

2. Complex Business Logic That Cannot Fail

Enterprise applications run critical operations such as billing, logistics, banking transactions, healthcare workflows, and government processes. These functions cannot tolerate errors or downtime. Even minor defects can ripple across multiple departments, customer segments, or compliance areas.

Testing such systems requires depth, context, and high accuracy.

3. Highly Interconnected Ecosystems

An enterprise application doesn’t just serve its own purpose; it often connects to:

- third-party vendors

- supply chain partners

- internal data lakes

- identity providers

- external API gateways

Testing these interdependencies requires advanced integration scenarios and robust coordination across teams. Failures are common when such complexity is underestimated.

4. Security, Compliance, and Governance Requirements

In industries like finance, healthcare, insurance, or the public sector, testing must account for:

- auditing requirements

- data protection regulations

- privacy constraints

- traceability

- reproducibility of results

This makes test planning, execution, and documentation far more demanding.

5. High Transaction Volumes and User Loads

Enterprise systems must handle thousands (or millions) of users, transactions, or data flows. Hence, performance testing isn’t a “nice to have”; it’s foundational.

But performance engineering requires specialized skills, realistic environments, and continuous tuning, all resources that many enterprises underinvest in.

6. Multiple Stakeholders With Conflicting Goals

The enterprise ecosystem includes:

- business owners

- IT leadership

- developers

- architects

- operations teams

- security teams

- compliance teams

- external vendors

Each group has its own priorities. Unless the testing strategy bridges these priorities and establishes shared quality objectives, the testing process becomes fragmented and ineffective.

Common Mistakes in Enterprise Testing Strategy Design

Even well-resourced enterprises fall into predictable traps when designing their testing strategy. Here are the enterprise testing strategy mistakes that most commonly lead to long-term failure.

1. Focusing on Coverage Instead of Risk

Trying to “test everything” is the fastest route to testing nothing effectively. Enterprises often design test suites based on exhaustive coverage goals rather than risk-based prioritization. This leads to bloated test suites, redundant scripts, and wasted effort.

2. Automating the Wrong Things

Automation becomes a liability when:

- teams automate unstable areas,

- test data changes frequently,

- UI layers are brittle,

- or business logic evolves rapidly.

Organizations often measure automation by script count instead of automation ROI, which leads to high maintenance cost and low actual value.

3. Creating Monolithic Test Suites

Large, tightly coupled test suites slow down feedback cycles and break often. When test cases depend on rigid flows, a small change can invalidate dozens of scripts. Enterprises need modular, atomic, maintainable test assets, not monolithic structures.

4. Underestimating the Importance of Test Data

Many defects in enterprise systems are data-driven. Yet test data provisioning is often an afterthought. Without high-quality test data, even the most sophisticated strategy collapses.

5. Treating QA as a Phase Instead of a Practice

Testing is still treated as a gatekeeping function in many organizations. But modern enterprise delivery demands testing be integrated into:

- requirements discussions,

- architecture decisions,

- CI/CD pipelines,

- and production monitoring.

A phase-based approach cannot keep pace with agile and DevOps delivery.

6. Lack of Engineering Mindset in QA

Modern testing requires a blend of engineering, domain knowledge, and analytical skills. When QA teams lack architectural awareness, coding skills, or automation engineering capabilities, they struggle to design effective strategies.

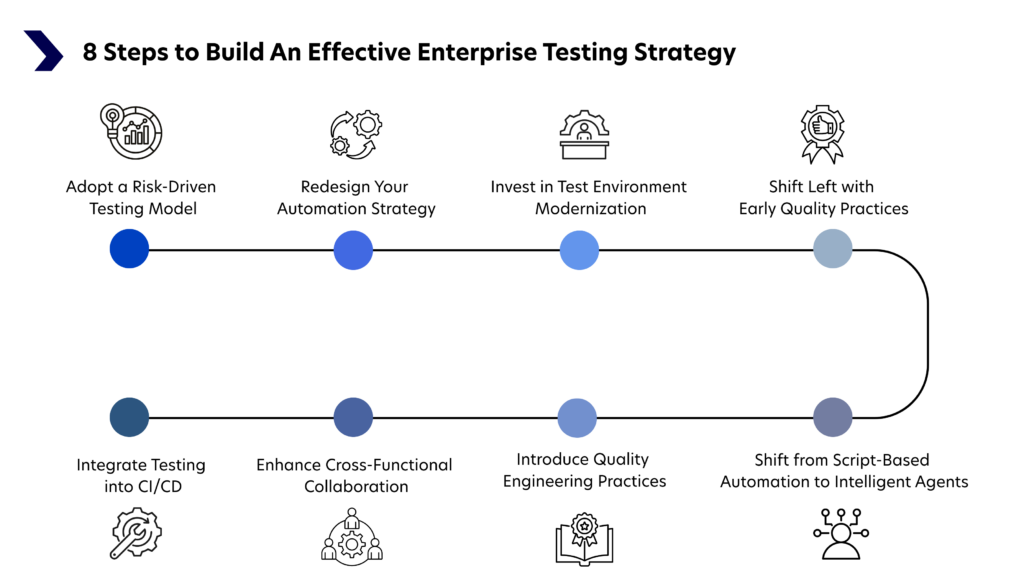

How to Fix a Failing Enterprise Testing Strategy

Turning around a failing test strategy requires a deliberate mix of cultural, architectural, and operational improvements.

“In complex enterprise ecosystems, testing must evolve from a validation checkpoint to an intelligence-driven discipline that predicts, prevents, and protects business value.”

Here’s how enterprises can rebuild a more effective approach.

1. Adopt a Risk-Driven Testing Model

Shift from coverage metrics to risk indicators. Classify components based on:

- business criticality,

- change frequency,

- integration complexity,

- and historical defect density.

This ensures testing aligns with what matters most.
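To make the classification concrete, here is a minimal sketch of a weighted risk score built on the four factors above. The weights, the 1-to-5 rating scale, and the component names are illustrative assumptions, not a prescribed model; each organization would calibrate its own.

```python
from dataclasses import dataclass

# Hypothetical weights (assumption): they sum to 1.0 so the score
# stays on the same 1-5 scale as the individual ratings.
WEIGHTS = {
    "business_criticality": 0.4,
    "change_frequency": 0.2,
    "integration_complexity": 0.2,
    "defect_density": 0.2,
}


@dataclass
class Component:
    name: str
    business_criticality: int    # 1 (low) to 5 (high)
    change_frequency: int        # 1 (rarely changes) to 5 (changes constantly)
    integration_complexity: int  # 1 (standalone) to 5 (many integrations)
    defect_density: int          # 1 (few past defects) to 5 (many)


def risk_score(component: Component) -> float:
    """Weighted sum of the four risk factors."""
    return sum(getattr(component, factor) * weight
               for factor, weight in WEIGHTS.items())


def prioritize(components):
    """Return components ordered from highest to lowest risk."""
    return sorted(components, key=risk_score, reverse=True)


# Illustrative components and ratings (assumptions).
components = [
    Component("billing", 5, 3, 4, 4),
    Component("profile-page", 2, 2, 1, 1),
    Component("order-routing", 4, 4, 5, 3),
]
ranked = prioritize(components)
for c in ranked:
    print(f"{c.name}: {risk_score(c):.1f}")
```

The ranking, rather than the absolute score, is what drives decisions: the highest-ranked components get the deepest test design and the earliest execution slots.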

2. Redesign Your Automation Strategy

Automation needs governance, not enthusiasm. Build a pragmatic automation roadmap that includes:

- clear automation selection criteria,

- architectural guidelines for test frameworks,

- tagging and traceability models,

- and ROI-driven prioritization.

Focus on API-level and service-level automation where stability is higher.
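ROI-driven prioritization can start with a very simple estimate. The sketch below assumes ROI is measured as hours of manual effort saved per year against hours spent building and maintaining the automated check; the formula and the sample figures are illustrative assumptions, not benchmarks.

```python
def automation_roi(manual_minutes_per_run: float,
                   runs_per_year: int,
                   build_hours: float,
                   maintenance_hours_per_year: float) -> float:
    """First-year ROI as (hours saved - hours invested) / hours invested."""
    hours_saved = manual_minutes_per_run / 60 * runs_per_year
    hours_invested = build_hours + maintenance_hours_per_year
    if hours_invested <= 0:
        raise ValueError("hours invested must be positive")
    return (hours_saved - hours_invested) / hours_invested


# Illustrative candidates (assumed numbers).
# A stable API check that runs on every build:
api_check_roi = automation_roi(30, 200, build_hours=16,
                               maintenance_hours_per_year=4)
# A brittle UI flow that runs rarely and breaks often:
ui_flow_roi = automation_roi(30, 20, build_hours=40,
                             maintenance_hours_per_year=60)

print(f"API check ROI: {api_check_roi:.1f}")
print(f"UI flow ROI: {ui_flow_roi:.1f}")
```

A negative result is a signal to keep the scenario manual, or to automate it at a more stable layer, rather than adding another script to the count.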

3. Invest in Test Environment Modernization

Stable, production-like environments are foundational. Enterprises should implement:

- environment-as-a-service,

- containerized test environments,

- synthetic data generation,

- and automated data refresh pipelines.

Environment stability translates directly to testing reliability.

4. Shift Left with Early Quality Practices

Introduce quality earlier through:

- static code analysis,

- unit and component-level testing,

- contract testing for APIs,

- and architecture quality checks.

The earlier defects are caught, the cheaper they are to fix.
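As one example of these practices, a contract test checks that a provider's response still has the shape a consumer relies on. The sketch below is a hand-rolled illustration in plain Python, not the API of any contract-testing tool; the field names and the `contract_violations` helper are hypothetical.

```python
# Hypothetical contract: the fields and types a consumer depends on.
ORDER_CONTRACT = {
    "order_id": str,
    "amount_cents": int,
    "currency": str,
}


def contract_violations(response: dict, contract: dict) -> list:
    """Return a list of human-readable violations (empty if compliant).

    Extra fields in the response are tolerated, so the provider can
    add data without breaking existing consumers.
    """
    problems = []
    for field, expected_type in contract.items():
        if field not in response:
            problems.append(f"missing field: {field}")
        elif not isinstance(response[field], expected_type):
            actual = type(response[field]).__name__
            problems.append(
                f"{field}: expected {expected_type.__name__}, got {actual}")
    return problems


good_response = {"order_id": "A-100", "amount_cents": 1999,
                 "currency": "USD", "note": "gift"}
bad_response = {"order_id": "A-101", "amount_cents": "1999"}

print(contract_violations(good_response, ORDER_CONTRACT))
print(contract_violations(bad_response, ORDER_CONTRACT))
```

Run against the provider's build, a check like this catches a breaking change before any integration environment is involved.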

5. Integrate Testing into CI/CD

Continuous testing isn’t optional anymore. Integrate:

- smoke tests,

- service-level tests,

- component-level tests,

- and fast regression suites

into CI pipelines, while reserving full end-to-end regressions for controlled environments.
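One way to picture this split is a mapping from pipeline stage to the test tiers allowed to run there. The stage names, tier names, and test names below are assumptions made for illustration; real pipelines would express the same idea through their own tagging mechanism.

```python
# Hypothetical mapping of pipeline stage -> test tiers that run there.
STAGE_TIERS = {
    "pull_request": {"smoke", "component"},
    "main_branch": {"smoke", "component", "service", "fast_regression"},
    # Full end-to-end regression is reserved for a controlled environment.
    "release_candidate": {"smoke", "component", "service",
                          "fast_regression", "e2e"},
}

# Hypothetical test inventory: test name -> tier.
TESTS = {
    "test_login_smoke": "smoke",
    "test_cart_component": "component",
    "test_pricing_service": "service",
    "test_core_regression": "fast_regression",
    "test_full_order_journey": "e2e",
}


def select_tests(stage: str) -> list:
    """Return the sorted names of tests whose tier is allowed in this stage."""
    allowed = STAGE_TIERS[stage]
    return sorted(name for name, tier in TESTS.items() if tier in allowed)


for stage in STAGE_TIERS:
    print(stage, select_tests(stage))
```

Keeping the mapping in one place makes it easy to see, and to debate, which feedback each stage is expected to deliver.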

6. Enhance Cross-Functional Collaboration

Testing is not the QA team’s responsibility alone. Build collaborative models involving:

- product owners for acceptance criteria clarity,

- developers for testability improvements,

- DevOps for environment automation,

- and business teams for realistic workflow validation.

Quality becomes a shared responsibility rather than an isolated function.

7. Introduce Quality Engineering Practices

Move toward a QE model emphasizing:

- code-first testing approaches,

- testing as code review criteria,

- observability for defect prediction,

- and proactive performance engineering.

Quality engineering elevates testing from validation to prevention.

8. Shift from Script-Based Automation to Intelligent Agents

Traditional automation follows predefined instructions. AI testing agents, however, behave more like decision-makers. They can:

- Analyze code changes and predict impact areas

- Dynamically generate or update test cases

- Adapt to UI modifications using self-healing logic

- Identify patterns in failures

- Continuously learn from past execution data

This transition reduces maintenance overhead and transforms testing from static execution to dynamic optimization.

Creating a Resilient and Scalable Enterprise Test Strategy

Building a long-lasting, scalable testing strategy requires forward-thinking design tailored specifically for enterprise complexity.

1. Architect for Modularity

Design test suites the way good software is designed: modular, reusable, and loosely coupled. This reduces maintenance cost and improves resilience to change.

2. Establish a Unified Test Governance Model

Governance should provide:

- standards for test design,

- guidelines for automation architecture,

- clear roles and responsibilities,

- metrics tied to business outcomes (not vanity metrics).

Strong governance keeps testing consistent across teams and projects.

3. Build a Test Data Strategy That Scales

A sustainable test data strategy includes:

- synthetic and masked data generation,

- automated provisioning pipelines,

- self-service tools for testers,

- clear compliance controls.

This ensures data availability never slows down testing.
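Masked data generation can be sketched with deterministic pseudonymization: the same input always maps to the same masked value, so joins across tables still line up. The example below uses Python's standard `hmac` and `hashlib` modules; the key, the field names, and the 12-character truncation are assumptions, and whether this level of masking satisfies a given regulation is a question for your compliance team.

```python
import hashlib
import hmac

# Assumption: in practice this key comes from a secrets manager,
# never from source code.
MASKING_KEY = b"replace-with-a-managed-secret"


def mask_value(value: str, prefix: str) -> str:
    """Replace a sensitive value with a keyed, deterministic pseudonym."""
    digest = hmac.new(MASKING_KEY, value.encode("utf-8"),
                      hashlib.sha256).hexdigest()
    return f"{prefix}_{digest[:12]}"


def mask_record(record: dict, sensitive_fields: set) -> dict:
    """Mask only the sensitive fields; leave everything else untouched."""
    return {
        key: mask_value(str(value), key) if key in sensitive_fields else value
        for key, value in record.items()
    }


# Illustrative record (made-up data).
customer = {"customer_id": 42, "email": "jane@example.com",
            "name": "Jane Doe", "plan": "premium"}
masked = mask_record(customer, {"email", "name"})
print(masked)
```

Because the mapping is deterministic, an email masked in the orders table matches the same email masked in the customers table, which keeps cross-system test scenarios intact.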

4. Implement Observability-Driven Testing

Use production telemetry to inform testing priorities. Observability data helps teams:

- identify real-world usage patterns,

- target high-value scenarios,

- and predict where failures are most likely.

Testing becomes smarter, not just broader.
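As a rough illustration, the sketch below ranks endpoints by how often they fail in production, using traffic volume as a tie-breaker. The event format, a list of endpoint and HTTP status pairs, and the sample data are assumptions; real telemetry would come from your observability platform.

```python
from collections import Counter


def rank_endpoints(events):
    """Rank endpoints by server-error count, then by request volume.

    `events` is an iterable of (endpoint, http_status) pairs.
    """
    total = Counter()
    errors = Counter()
    for endpoint, status in events:
        total[endpoint] += 1
        if status >= 500:
            errors[endpoint] += 1
    return sorted(total,
                  key=lambda e: (errors[e], total[e]),
                  reverse=True)


# Illustrative telemetry sample (made-up data).
events = [
    ("/checkout", 200), ("/checkout", 500), ("/checkout", 503),
    ("/search", 200), ("/search", 200), ("/search", 200), ("/search", 200),
    ("/profile", 200), ("/profile", 500),
]
priority = rank_endpoints(events)
print(priority)
```

The endpoints at the top of the list are the first candidates for deeper regression coverage and targeted exploratory sessions.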

5. Balance Human Insight with Automation Efficiency

Automation testing has many benefits, but human intuition remains invaluable. Balance:

- exploratory testing for unknowns,

- automated regression for knowns,

- and user-journey testing for validation.

A blended approach provides wider and deeper coverage.

6. Continuously Evolve the Strategy

A test strategy is not a static document – it’s a living practice. Conduct quarterly reviews of:

- metrics,

- bottlenecks,

- tooling effectiveness,

- and team capabilities.

Adaptive strategies survive; static ones fail.

Conclusion

Enterprise testing strategies fail for many reasons – misaligned priorities, outdated methods, brittle automation, data challenges, and the overwhelming complexity of modern enterprise systems. But failure is not inevitable.

By embracing risk-driven testing, engineering-first quality practices, intelligent automation, modular test architectures, and strong governance, enterprises can transform testing from a bottleneck into a competitive advantage.

A resilient and scalable enterprise testing strategy isn’t about testing more – it’s about testing smarter, earlier, and with deeper insight into the business systems that drive value.

This is where Quinnox’s AI-powered software testing solutions make a measurable difference. By combining AI-driven test intelligence, predictive analytics, adaptive automation frameworks, and deep enterprise system expertise, Quinnox helps organizations modernize legacy QA models into intelligent quality ecosystems.

Connect with our experts today and transform your enterprise testing strategy into a strategic enabler of digital success.

Assistant Manager, Marketing, Quinnox

FAQ About Enterprise Testing Strategy

Enterprise testing often fails not because of insufficient funding, but because of misaligned strategy. Organizations tend to invest heavily in tools and automation without modernizing processes, governance, or team collaboration models. Siloed teams, outdated frameworks, bloated regression suites, and unclear quality metrics dilute the impact of even large budgets. Without risk-based prioritization and strong alignment to business outcomes, spending increases but effectiveness does not.

Enterprise systems are deeply interconnected, which makes testing far more complex than it appears. Hidden challenges include cross-system integrations, unstable test environments, inconsistent test data, role-based access variations, and compliance constraints. Small changes in one module can affect multiple downstream systems, making impact analysis difficult and increasing the risk of unexpected failures in production.

Reducing QA failures requires a shift from reactive testing to proactive quality engineering. This includes adopting risk-based testing, embedding validation earlier in the development lifecycle, stabilizing automation frameworks, improving test data governance, and using analytics to identify high-risk areas. Continuous monitoring and measurable release readiness criteria also help prevent defects from escaping into production.

Improving a failing strategy begins with a structured assessment of gaps in coverage, automation stability, and process efficiency. Enterprises should redefine quality KPIs aligned with business priorities, optimize regression suites to eliminate redundancy, integrate testing into CI/CD pipelines, and introduce intelligent automation for smarter prioritization and defect analysis. Sustainable improvement comes from combining governance, data-driven insights, and cross-functional collaboration.