The world of software development is evolving at an unprecedented pace, and with it, the demands on quality assurance (QA) are growing more complex than ever. Traditional QA practices, often reliant on manual testing and rigid automation scripts, struggle to keep up with rapid release cycles, diverse platforms, and increasingly intricate user scenarios. These limitations lead to slower feedback, missed defects, and ultimately, a gap in software quality that can affect user experience and business outcomes.

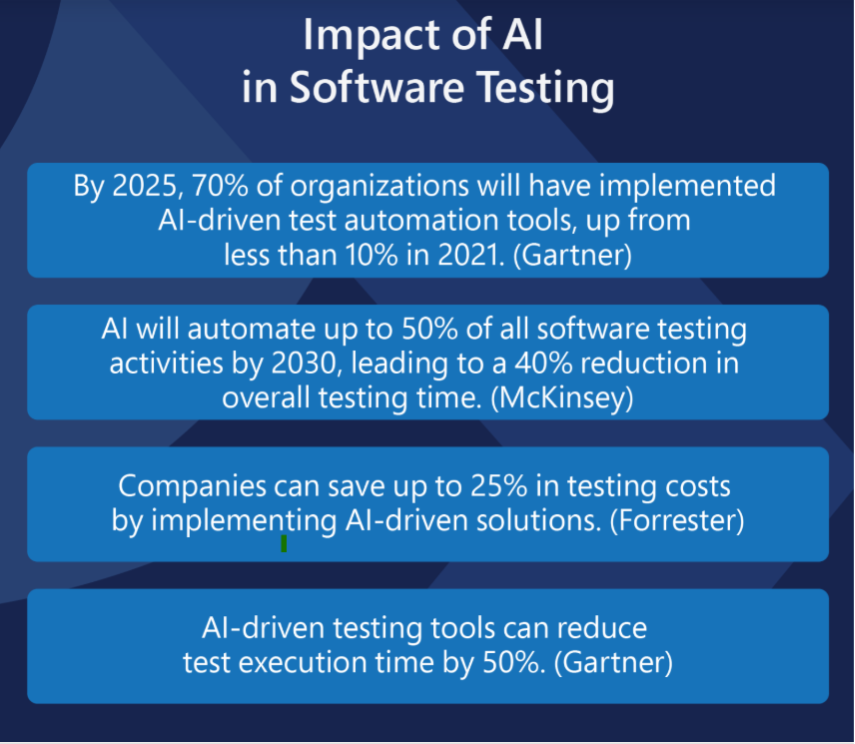

Now, a new era is dawning with the rise of AI testing agents – autonomous, intelligent systems designed to revolutionize how software is tested. A recent McKinsey study shows that an overwhelming 92% of businesses plan to boost their investment in generative AI over the next three years – setting the stage for widespread adoption of AI agents capable of autonomous decision-making across industries. By enhancing test coverage, accelerating execution, and enabling adaptive learning from previous runs, AI testing agents are not just automating tasks – they’re fundamentally redefining what it means to ensure software quality in a fast-moving digital world.

In this blog, let us explore how AI testing agents are reshaping the future of software testing and why embracing them in QA is the way forward.

What Is an AI Testing Agent?

An AI agent is a piece of software that can act independently to carry out tasks by observing its surroundings, making decisions, and learning from its experiences. Instead of just following strict instructions, it adjusts its behavior based on what it senses and the goals it needs to achieve.

You can think of it as a kind of digital assistant that can handle complexity and unexpected situations on its own.

Key Features of AI Agents

- Autonomy: They work without needing constant input from people. Once set up, they can make choices and take action by themselves.

- Perception: AI agents gather information from their environment through sensors or data inputs to understand what’s happening around them.

- Decision-Making: Using the data they collect, these agents analyze options and choose the best course of action toward reaching their objectives.

- Learning: Over time, they improve by recognizing patterns and adapting based on past outcomes, becoming more effective with experience.

- Goal-Oriented: They are designed to focus on achieving specific tasks or solving particular problems.

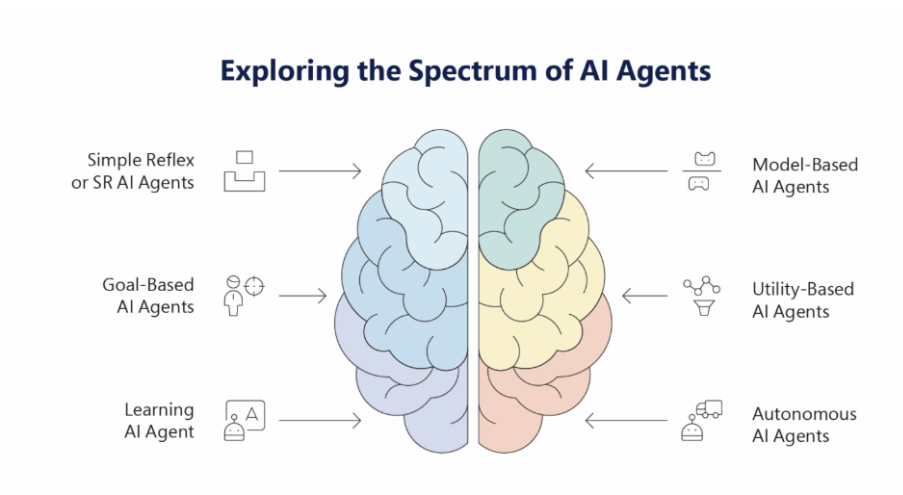

Types of AI Agents

- Simple Reflex Agents: These respond directly to specific inputs with predefined actions. They don’t consider the history of what happened before, just the current situation.

- Model-Based Agents: These keep track of the world’s state, including past events, to make better decisions. They have an internal representation of their environment.

- Goal-Based Agents: These plan their actions by thinking about how to achieve a certain goal, often weighing different options before deciding.

- Utility-Based Agents: They go beyond just achieving goals; they evaluate how good each possible outcome is and aim to maximize their “happiness” or effectiveness.

- Learning Agents: These agents improve their behavior over time by learning from experience, adjusting their strategies based on feedback and new information.
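The distinctions above are easier to see side by side. The following is a minimal sketch, not a reference implementation: the function and class names, the HTTP status codes used as "observations", and the utility values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


def reflex_agent(status_code: int) -> str:
    """Simple reflex: maps the current input straight to an action."""
    return "report_failure" if status_code >= 500 else "continue"


@dataclass
class ModelBasedAgent:
    """Keeps an internal record of past observations to inform decisions."""
    history: list = field(default_factory=list)

    def act(self, status_code: int) -> str:
        self.history.append(status_code)
        recent = self.history[-3:]
        # Escalate only when the last three observations were all server errors.
        if len(recent) == 3 and all(code >= 500 for code in recent):
            return "escalate_outage"
        return reflex_agent(status_code)


def utility_agent(options: dict) -> str:
    """Utility-based: picks the action with the highest estimated payoff."""
    return max(options, key=options.get)


agent = ModelBasedAgent()
actions = [agent.act(code) for code in (200, 503, 502, 500)]
print(actions)
print(utility_agent({"rerun_smoke_suite": 0.4, "run_full_regression": 0.7}))
```

The reflex function reacts to each response in isolation, while the model-based agent only escalates after it has seen a run of failures, which is the kind of history-aware behavior the list describes.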

How Do AI Testing Agents Work?

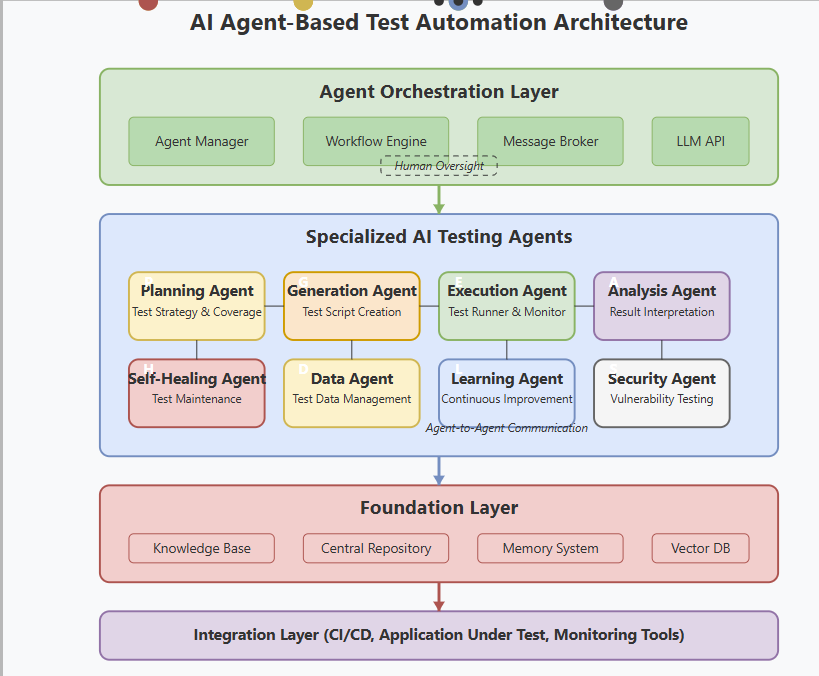

AI testing agents function by observing the software they need to test, understanding how it behaves, and then deciding what actions to take to check if everything is working properly. Instead of relying on fixed test scripts, these agents explore different parts of the software on their own.

Here’s a clear step-by-step breakdown:

- Input Gathering: At the first stage, the AI agent pulls in data from sources like requirements docs, code repositories, or past test results. Using NLP, it “reads” natural language to understand what the software should do.

- Test Generation: Next, based on the expected outcomes, the agent creates test scenarios automatically. For instance, ML algorithms predict potential failure points from historical data, generating thousands of variations in minutes.

- Execution and Monitoring: The agent runs tests across environments (web, mobile, or APIs) while watching for failures. Tools with computer vision can even test UI elements by “scanning” the screens.

- Analysis and Reporting: After each testing cycle, the agent updates its knowledge base with insights from functional failures, performance bottlenecks, security vulnerabilities, and accessibility issues, making it smarter for future comprehensive testing.

- Adaptive Learning: If code changes break a test, the agent updates it automatically, keeping your pipeline flowing.
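To make the last step concrete, here is one way a test could "heal" itself when an element ID changes. This is a simplified sketch under stated assumptions: real agents typically combine many signals (DOM structure, visual position, text), whereas this example only uses string similarity from Python's standard `difflib` module. The function name `heal_locator`, the element IDs, and the 0.6 threshold are hypothetical.

```python
from difflib import SequenceMatcher


def heal_locator(broken_id: str, current_ids: list[str], threshold: float = 0.6):
    """Return the closest current element ID, or None if nothing is similar enough."""
    best_id, best_score = None, 0.0
    for candidate in current_ids:
        # ratio() is a 0..1 similarity score between the two strings.
        score = SequenceMatcher(None, broken_id, candidate).ratio()
        if score > best_score:
            best_id, best_score = candidate, score
    return best_id if best_score >= threshold else None


# IDs found on the page after a refactor renamed the submit button.
page_ids = ["nav-home", "btn-submit-order", "footer-links"]
print(heal_locator("submit-order-btn", page_ids))
print(heal_locator("captcha-widget", page_ids))
```

Returning `None` when no candidate clears the threshold matters as much as finding a match: a locator that silently "heals" onto the wrong element would hide a real defect, so ambiguous cases are better handed to a human.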

This process feeds into broader trends such as self-healing scripts and predictive coverage. For enterprise leaders, these agents can close gaps by integrating with CI/CD tools like Jenkins or GitHub Actions, making them valuable for DevOps teams handling AI-infused software.

AI Agent vs. AI-Assisted Testing Workflows

When it comes to modern software testing, the terms AI Agent and AI-Assisted Testing often come up, but they’re not quite the same. Understanding the difference between fully autonomous AI agents and tools that simply assist human testers can help teams choose the right approach for their needs.

Let’s take a closer look at how these two methods compare and what each brings to the table.

| Aspect | AI Agent | AI-Assisted |
|---|---|---|
| Level of Autonomy | Fully autonomous; executes end-to-end testing workflows without human intervention | Human-led with AI providing recommendations, automation, and assistance |
| Decision-Making | Learns patterns, makes decisions, and adapts test strategies in real time | Relies on human testers to make final decisions and define strategy |
| Test Creation | Automatically generates, updates, and prioritizes test cases based on system changes and historical data | Suggests test cases, accelerates script creation, and flags potential gaps |
| Execution | Runs tests continuously, self-schedules based on risk or system changes | Executes only when triggered by human testers or CI/CD pipelines |
| Maintenance | Self-healing: adjusts tests automatically when system changes occur | AI highlights broken tests, but humans must validate and update |
| Error Handling | Identifies, classifies, and resolves issues autonomously (with escalation if needed) | Detects anomalies but requires human input to resolve |
| Compliance & Documentation | Generates audit-ready reports automatically; ensures alignment with standards | Assists with documentation but still requires manual validation |
| Human Role | Supervisory: humans review insights and handle edge cases | Active: humans guide, approve, and interpret results |
| Scalability | Scales testing efforts dynamically with minimal human overhead | Scaling depends on human tester availability and oversight |
| Best Use Case | Continuous, large-scale, dynamic environments needing minimal human touch | Teams seeking speed and efficiency but retaining control over decisions |

Where AI Agents Fit in the Software Testing Lifecycle

The software testing lifecycle is a structured journey designed to validate that software behaves as expected and meets quality standards. AI agents are gradually becoming integral partners in this journey, enhancing efficiency, precision, and adaptability at various stages.

Development Phase QA

- Code quality analysis: Reviews commits for potential functional, security, and performance issues.

- Unit and integration test generation: Creates detailed test coverage for new code.

- Accessibility-first development: Validates that new UI components meet accessibility standards.

- Security code scanning: Identifies vulnerabilities as soon as they’re introduced.

Pre-Integration QA

- Component testing: Validates individual features across functional and non-functional requirements

- Database integrity testing: Ensures data consistency and validation rules

- UI component testing: Verifies interface elements so that they work correctly across different states

- Performance baseline testing: Sets benchmarks for acceptable response times
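As an illustration of the performance baseline item, the sketch below checks whether the 95th-percentile response time of a test run stays within a tolerance of an agreed baseline. It uses only Python's standard `statistics` module; the function names, the 10% tolerance, and the sample timings are hypothetical and would need to be replaced with your own targets.

```python
import statistics


def p95(samples_ms: list[float]) -> float:
    """95th percentile of response times in milliseconds."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(samples_ms, n=20)[-1]


def within_baseline(samples_ms: list[float], baseline_p95_ms: float,
                    tolerance: float = 0.10) -> bool:
    """True if the measured p95 is no more than `tolerance` above the baseline."""
    return p95(samples_ms) <= baseline_p95_ms * (1 + tolerance)


steady_run = [100.0] * 20                # every request took 100 ms
spiky_run = [100.0] * 19 + [400.0]       # one slow outlier in twenty requests

print(within_baseline(steady_run, baseline_p95_ms=120.0))
print(within_baseline(spiky_run, baseline_p95_ms=120.0))
```

A percentile is used instead of the average because a handful of slow requests can leave the mean looking healthy while a meaningful share of users still experience delays.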

Integration and System QA

- End-to-end workflow testing: Validates complete user journeys across the entire system

- Cross-browser and device testing: Ensures consistent experience across platforms

- Load and stress testing: Verifies system behavior under different usage patterns

- Security penetration testing: Identifies vulnerabilities in integrated systems and applies remediation measures, either automatically or as configured by your team

- Accessibility compliance testing: Validates WCAG conformance across complete workflows

Pre-Production QA

- Production-like environment validation: Supports comprehensive testing under realistic conditions.

- Disaster recovery testing: Validates system resilience and recovery procedures

- Compliance and audit preparation: Ensures that the regulatory requirements are met.

- User acceptance testing support: Validates business requirements and user experience.

Production QA and Monitoring

- Continuous monitoring: Watches for functional, performance, and security anomalies.

- Real user monitoring: Analyzes actual user behavior and helps identify issues proactively.

- Automated regression testing: Ensures new deployments don’t break existing functionality.

- Performance degradation detection: Identifies and alerts on system slowdowns.

5 Core Benefits of Using AI Testing Agents

In the evolving world of software development, AI testing agents are reshaping how quality assurance is approached. Far beyond simple automation, these intelligent agents bring a level of adaptability and insight that transforms the entire testing process. Here are five fundamental advantages that AI testing agents offer:

1. Proactive Issue Identification

Unlike traditional testing, which often reacts to predefined scenarios, AI testing agents analyze application behavior continuously. This proactive stance allows them to detect anomalies and potential issues even before they manifest as errors, reducing the risk of costly surprises in production.

2. Accelerated Test Coverage

AI agents excel at exploring complex systems rapidly, identifying paths and edge cases that might elude manual testers. This breadth of coverage ensures that the software is scrutinized from multiple angles, enhancing confidence that the product can handle real-world scenarios.

3. Dynamic Adaptability

Software environments are in constant flux, with frequent updates and integrations. AI testing agents adapt on the fly, learning from changes and adjusting their test strategies accordingly. This reduces the maintenance burden typically associated with static test scripts, keeping testing relevant without manual rewrites.

4. Enhanced Efficiency and Resource Optimization

By automating repetitive and time-consuming tasks, AI testing agents free human testers to focus on strategic analysis and exploratory testing. This shift not only speeds up release cycles but also optimizes team productivity and resource allocation.

5. Data-Driven Insights for Continuous Improvement

Beyond executing tests, AI agents generate rich data on performance trends, failure patterns, and user interactions. These insights empower development teams to make informed decisions, prioritize fixes effectively, and continuously elevate software quality.

How to Use AI Testing Agents Effectively (Tips and Strategies)

Using AI testing agents effectively means weaving their capabilities into the fabric of your development process with intention and care. When balanced with human insight, these agents can transform testing into a smarter, more agile function – one that not only catches bugs but anticipates them.

Here are some practical approaches to get the most from AI testing agents:

1. Define Clear Objectives and Boundaries

Begin by outlining what success looks like for your testing efforts. AI agents thrive when given specific goals, whether it’s reducing test cycle time, improving coverage, or catching elusive bugs early. Establishing boundaries helps these agents focus on meaningful tasks, avoiding wasted cycles on irrelevant or redundant checks.

2. Integrate AI Agents into Existing Workflows

Rather than replacing established processes outright, AI testing agents should augment them. Seamless integration with current tools and pipelines ensures that AI-generated insights complement human expertise, enabling faster feedback loops without disrupting the team’s rhythm.

3. Continuously Train and Update Agents

The strength of AI lies in learning from data, so feeding agents with recent test results, application updates, and user behavior patterns is essential. This ongoing training enables them to evolve alongside the software, maintaining accuracy and relevance even as the codebase shifts.

4. Combine AI Insights with Human Judgment

AI agents excel at pattern recognition and volume, but they lack contextual nuance. Encourage testers to review AI findings critically, using their experience to validate issues and interpret subtle signs that might indicate deeper problems. This collaboration elevates the quality of defect detection and prioritization.

5. Prioritize Test Scenarios with Business Impact

Not all tests carry equal weight. Use AI to identify high-risk areas, such as features with frequent changes or critical business functions, and prioritize those for deeper scrutiny. This targeted approach maximizes value by aligning testing efforts with what matters most to users and stakeholders.
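One simple way to picture this kind of prioritization is a risk score that combines change frequency, defect history, and business criticality. The sketch below is illustrative only: the `FeatureArea` fields, the weighting formula, and the sample numbers are hypothetical assumptions, and a real agent would likely learn such weights from data instead of hard-coding them.

```python
from dataclasses import dataclass


@dataclass
class FeatureArea:
    name: str
    changes_last_30_days: int   # how often the feature's code changed recently
    business_criticality: int   # 1 (low) to 5 (critical), assigned by the team
    past_defects: int           # defects previously found in this area


def risk_score(area: FeatureArea) -> int:
    # Assumed weighting: past defects count double, and criticality scales the total.
    churn = 1 + area.changes_last_30_days + 2 * area.past_defects
    return area.business_criticality * churn


areas = [
    FeatureArea("checkout", changes_last_30_days=12, business_criticality=5, past_defects=3),
    FeatureArea("profile settings", changes_last_30_days=2, business_criticality=2, past_defects=0),
    FeatureArea("search", changes_last_30_days=8, business_criticality=3, past_defects=1),
]

# Highest-risk areas first, so they receive the deepest test coverage.
for area in sorted(areas, key=risk_score, reverse=True):
    print(f"{area.name}: {risk_score(area)}")
```

With these sample values, checkout ranks first because it is both business-critical and frequently changed, while a stable, low-impact area like profile settings falls to the bottom of the queue.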

6. Monitor and Measure AI Performance Regularly

Implement metrics such as defect detection rates, false positives, and time saved to track how well AI agents are performing. Such periodic reviews help uncover blind spots or inefficiencies, allowing continuous refinement of AI strategies.
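As a rough sketch of how two of these metrics might be computed from a review cycle, consider the function below. The function name, the metric definitions, and the sample counts are assumptions made for illustration; teams often define these rates slightly differently, so align the formulas with your own reporting conventions.

```python
def agent_metrics(true_positives: int, false_positives: int, missed_defects: int) -> dict:
    """Summarize agent accuracy for one review period.

    true_positives: issues flagged by the agent and confirmed by testers
    false_positives: issues flagged by the agent but rejected by testers
    missed_defects: real defects found later that the agent did not flag
    """
    flagged = true_positives + false_positives
    actual = true_positives + missed_defects
    return {
        # Share of real defects that the agent caught.
        "detection_rate": true_positives / actual if actual else 0.0,
        # Share of the agent's flags that turned out to be noise.
        "false_positive_share": false_positives / flagged if flagged else 0.0,
    }


# Hypothetical sprint: 45 confirmed findings, 15 rejected, 5 defects missed.
print(agent_metrics(true_positives=45, false_positives=15, missed_defects=5))
```

Tracking both numbers together is useful because they pull in opposite directions: tuning an agent to flag more aggressively usually raises the detection rate but also the share of false alarms that testers must review.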

7. Foster a Culture Open to AI-Driven Change

Successful adoption depends as much on people as on technology. Encourage curiosity and experimentation within your team, creating an environment where testers see AI agents as collaborators rather than competitors. Training and transparent communication are key to building trust.

Common Challenges and How to Overcome Them

AI agents hold immense promise for transforming workflows, yet integrating them effectively is not without obstacles. Organizations frequently encounter challenges that can limit the impact of these intelligent tools if not addressed proactively. Understanding these hurdles and adopting practical strategies to navigate them is essential for unlocking AI’s full potential.

Challenge 1: "The AI Finds Too Many Issues across Too Many Areas"

Comprehensive AI testing can discover hundreds of issues across functional, performance, security, and accessibility domains, overwhelming teams.

Solution: Implement risk-based prioritization. Configure agents to focus first on issues that impact core business functions, security compliance requirements, or legal accessibility obligations.

Challenge 2: "Different QA Disciplines Have Conflicting Requirements"

Performance optimizations might affect accessibility, security measures might affect usability, and functional changes might break existing tests.

Solution: Use AI agents’ cross-disciplinary analysis capabilities. Modern agents can identify these conflicts and suggest balanced solutions that maintain quality across all dimensions.

Challenge 3: "Our Team Lacks Expertise to Validate AI Results in All Areas"

QA teams might be strong in functional testing but lack deep knowledge in security assessment or accessibility compliance to validate AI findings.

Solution: Use AI agents as a learning aid. Many current platforms provide detailed explanations of why issues were flagged, helping teams learn while validating results.

Challenge 4: "Integration with Specialized Tools Is Complex"

A comprehensive strategy for QA often requires multiple specialized tools that don’t integrate well with each other or with AI agents.

Solution: Prioritize AI agents with broad integration capabilities or those that provide built-in functionality across multiple QA disciplines, reducing tool sprawl.

Challenge 5: "Maintaining Quality Standards across All Disciplines Is Overwhelming"

Keeping up with evolving standards in accessibility (WCAG updates), security (new vulnerability types), performance (changing user expectations), and functionality (business requirement changes) is difficult.

Solution: Choose AI agents that automatically update their knowledge bases with current standards and best practices across all QA disciplines.

Future of AI Agents in Software QA

The rapid evolution of software development methodologies, the explosion of data, and the increasing complexity of applications have pushed traditional QA methods to their limits. AI agents are emerging as transformative enablers, poised to reshape how quality is assured—making testing smarter, faster, and more aligned with business goals.

At the forefront of this transformation is Qyrus, our end-to-end AI-powered test automation platform that stands out as a visionary enabler in this evolving space. It combines AI-driven test automation with intelligent analytics, providing a unified solution that bridges the gap between manual testing, automation, and AI-powered insights.

Ready to elevate your testing to the next level? Explore our software testing solutions and experience the future of quality assurance today.

FAQs About AI Testing Agents

AI agents cover functional, performance, security, accessibility, UI, and integration testing—across workflows, APIs, databases, and user interfaces.

They’re trained across disciplines—functional, performance, security, and accessibility—bringing expertise that would normally require multiple specialists.

No. They augment QA teams by automating repetitive testing and surfacing insights. Human experts still guide strategy, validate complex business logic, and assess UX.

Agents use risk-based prioritization, weighing business impact, compliance, user experience, and security. They adapt to industry needs—for example, accessibility in healthcare or performance in consumer apps.

Most teams are productive in 2–4 weeks with functional testing. Full cross-discipline adoption (security, accessibility, performance) typically takes 2–3 months.

They apply unified frameworks and shared validation rules, ensuring improvements in one area (like performance) don’t break another (like accessibility or security).