Modern software delivery no longer follows a predictable path from requirements to release. Applications evolve continuously, user interfaces change frequently, APIs are constantly versioned, and cloud environments scale dynamically to meet demand. In this reality, quality assurance cannot function as a final checkpoint in the delivery pipeline – it must operate as a continuous capability that keeps pace with modern development speed.

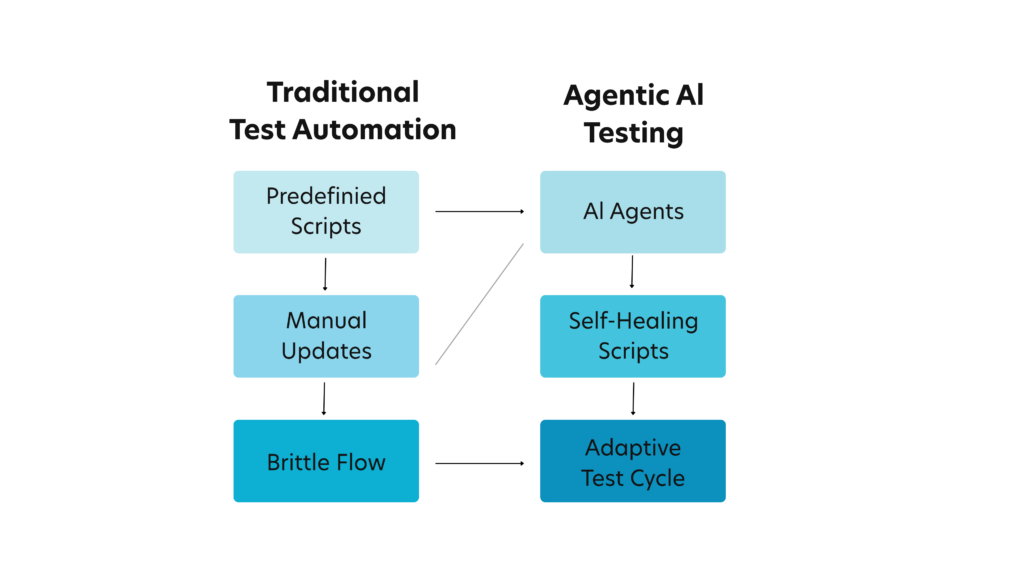

Against this backdrop, traditional testing approaches built around manual effort and brittle automation scripts struggle to keep up. Even well-designed automation frameworks often require significant maintenance as interfaces shift, workflows evolve, and underlying services are updated. As a result, many organizations find that automation intended to accelerate delivery ends up consuming substantial time and effort simply to remain functional.

This is where Agentic AI Testing is emerging as a transformative approach. Instead of relying solely on predefined scripts, agentic systems introduce autonomous AI agents that can understand testing goals, generate and execute validation flows, adapt to application changes, and continuously improve testing outcomes. These agents behave less like static automation tools and more like intelligent digital testers that reason about user intent and validate whether systems continue to deliver the expected experience.

In practical terms, AI agents can navigate applications, interact with APIs, validate business workflows, and adjust their execution paths when the application evolves. This capability enables testing systems that are adaptive, context-aware, and capable of expanding coverage over time rather than remaining fixed to a static set of scripts.

This article explores what Agentic AI Testing means in practice, how it differs from traditional automation and AI-assisted testing, where it fits within a modern QA strategy, and which industry use cases are already demonstrating tangible business value.

What Is Agentic AI Testing?

At its core, Agentic AI Testing is quality assurance powered by AI agents that can plan and act. A traditional automated test follows a predefined script: click this element, enter this value, verify that label. When the application behaves exactly as expected, the test succeeds. When anything in the workflow changes, even slightly, the test often fails.

This rigidity creates a paradox. Automation is intended to increase efficiency, yet in many organizations, maintaining automation frameworks consumes a disproportionate amount of testing effort. Agentic AI Testing approaches the problem differently. Instead of defining every action, teams specify the intent of the test – the outcome that must be validated.

For instance, you provide a goal, such as “a user should be able to register, verify email, log in, and complete checkout,” and an agentic testing system interprets this intent and determines how to execute the validation. It navigates the interface, interacts with services, verifies outputs, and adjusts its approach if elements change.
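One way to picture an intent-based test is as a goal plus the outcomes that must hold, with no step-level instructions. The sketch below is illustrative only: `JourneyIntent`, its fields, and the outcome names are hypothetical and do not come from any specific tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JourneyIntent:
    """Hypothetical goal-level test definition: what must be true, not how to get there."""
    goal: str
    required_outcomes: tuple  # outcome names the agent must observe


def unmet_outcomes(intent, observed):
    """Return the required outcomes that have not been observed yet, in declared order."""
    return [o for o in intent.required_outcomes if o not in observed]


checkout_intent = JourneyIntent(
    goal="A user can register, verify email, log in, and complete checkout",
    required_outcomes=("registered", "email_verified", "logged_in", "order_confirmed"),
)
```

An agent would keep working until `unmet_outcomes` returns an empty list, or escalate to a human if it cannot make progress.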

Because agents are context-aware, they can recover from common disruptions that break classic automation. If a button label changes, a field moves, or a UI component is re-rendered, an agent can search for the best match, evaluate page structure, and continue. In many implementations, agents also capture what changed and update the test logic, creating self-healing behaviors that reduce maintenance.

Importantly, agentic testing is not about replacing QA teams. It is about augmenting them – delegating repetitive work, shrinking feedback loops, and helping testers focus on strategy, edge cases, and business risk.

A Comparative Look: Agentic AI Testing vs. Traditional Automation and AI-Assisted Testing

| Dimension | Traditional Test Automation | AI-Assisted Testing | Agentic AI Testing |
|---|---|---|---|
| Core Approach | Script-driven automation that executes predefined steps and validations. | AI enhances parts of the testing process such as test generation, element detection, or data creation. | Autonomous AI agents plan, execute, adapt, and improve tests based on defined business intent. |
| Test Design Model | Humans manually design test scripts and workflows. | AI may help generate test cases, but humans still define most scenarios. | Agents generate and evolve test scenarios dynamically based on goals, application behavior, and historical data. |
| Level of Autonomy | No autonomy. Tests strictly follow predefined instructions. | Limited autonomy. AI assists specific tasks but execution and orchestration remain human-driven. | High autonomy. Agents can decide how to execute workflows, adjust plans, and complete validations independently. |
| Adaptability to Application Changes | Low adaptability. Even minor UI or workflow changes can break scripts. | Moderate adaptability. AI may improve element recognition or locator stability. | High adaptability. Agents analyze context, identify alternative paths, and continue execution even when workflows evolve. |
| Maintenance Effort | High. Frequent script updates required when applications change. | Medium. AI reduces some maintenance but still relies heavily on human intervention. | Low. Self-healing and contextual reasoning reduce script maintenance significantly. |
| Test Coverage Expansion | Limited by manual script creation and maintenance effort. | Improved coverage through AI-generated suggestions and data variations. | Dynamic coverage expansion as agents explore workflows, edge cases, and alternate user paths autonomously. |
| Handling Complex Workflows | Challenging to maintain long, multi-system test flows. | AI can assist but orchestration complexity remains high. | Designed for complex enterprise workflows spanning multiple systems, APIs, and services. |
| Execution Intelligence | Executes predefined steps without interpreting outcomes beyond assertions. | Provides insights and analytics but limited decision-making during execution. | Continuously evaluates outcomes, adjusts execution paths, and learns from failures and successes. |
| Failure Analysis | Failures require manual investigation by QA teams. | AI may assist in log analysis or root-cause suggestions. | Agents cluster failures, highlight likely root causes, and recommend remediation paths. |
| Scalability for Modern DevOps | Difficult to scale due to maintenance overhead and brittle scripts. | Better scalability than traditional automation but still dependent on manual oversight. | Highly scalable as agents adapt automatically and integrate seamlessly into CI/CD pipelines. |
| Role of QA Engineers | Focus heavily on writing and maintaining scripts. | Balance between managing automation and validating AI-generated outputs. | Focus shifts toward strategy, risk analysis, and high-value exploratory testing. |

The progression from traditional automation to agentic testing reflects a broader industry shift – from execution-focused automation to intelligent, adaptive quality systems capable of evolving alongside modern applications.

How Agentic AI Testing Operates Across the Testing Lifecycle

To appreciate the operational value of agentic testing, it is useful to examine how these systems participate across different phases of the testing lifecycle.

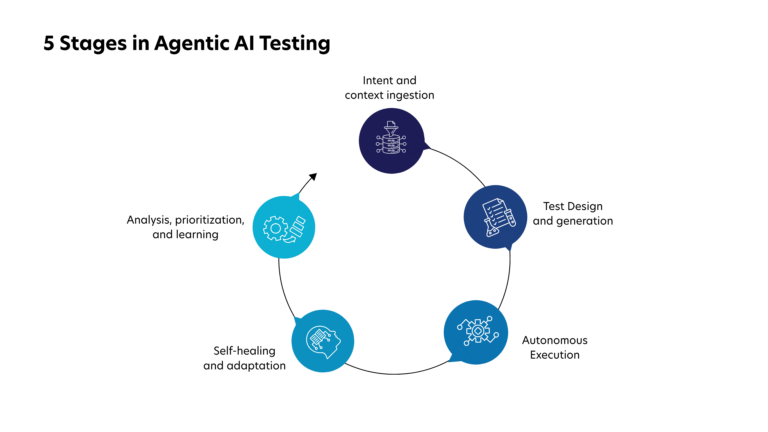

1) Intent and context ingestion

Agentic systems begin by ingesting contextual information about the application and its intended behavior. Input can include user stories, acceptance criteria, UI flows, API specs, production analytics, and defect history. By analyzing these signals together, agents gain a deeper understanding of which workflows are business-critical and where risk is most likely to appear.

This context-driven approach addresses a common gap in traditional testing. According to a report published on ResearchGate, nearly 60% of defects that reach production are linked to incomplete or poorly interpreted requirements. By directly interpreting the requirement context, agentic systems help ensure that testing aligns more closely with real business outcomes.

2) Test design and generation

Based on goals, agents propose test scenarios. These scenarios can include happy paths, negative paths, boundary cases, role-based access checks, and data validation. Because agents analyze historical test runs and defect patterns, they can also suggest new scenarios that human testers might not immediately identify.
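As a small illustration of how boundary cases can be derived mechanically, the sketch below applies classic boundary-value analysis to an inclusive integer range, for example a hypothetical order-quantity field. The function name and return shape are assumptions for illustration; a real agent would combine rules like this with context from requirements and defect history.

```python
def boundary_values(minimum, maximum):
    """Boundary-value analysis for an inclusive integer range [minimum, maximum]."""
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    # Values on and just inside each edge; the set removes duplicates for narrow ranges.
    candidates = {minimum, minimum + 1, maximum - 1, maximum}
    valid = sorted(v for v in candidates if minimum <= v <= maximum)
    # Values just outside each edge should be rejected by the application.
    invalid = [minimum - 1, maximum + 1]
    return {"valid": valid, "invalid": invalid}
```

For a quantity field that accepts 1 to 10, this yields the valid inputs 1, 2, 9, and 10 and the invalid inputs 0 and 11.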

AI-driven test generation significantly improves coverage. Industry research indicates that organizations adopting AI-assisted test design have seen up to a 35% increase in functional test coverage without proportional growth in testing effort. The result is broader and more meaningful validation of application behavior.

3) Autonomous execution across layers

Agentic testing is not limited to the UI; it spans multiple layers of the technology stack. Agents can drive UI workflows, call APIs, validate responses, check logs, query test databases, and correlate results across services. This cross-layer visibility allows the system to confirm that complete business workflows function correctly, not just individual screens or endpoints.

This capability is particularly important in modern architectures. According to Gartner, over 70% of enterprise applications now rely on microservices or distributed architectures, increasing the need for integration-level testing across services.

4) Self-healing and adaptation

When an element changes or a step fails due to a minor UI update, agentic systems respond with self-healing capabilities: they analyze the application structure to identify alternative elements or execution paths. Instead of stopping the test immediately, they search for alternatives, retry with updated selectors, and continue validation. They also store what they learned, so the next run is more stable.
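A very simplified view of self-healing is fuzzy matching: when the expected element label is missing, pick the closest candidate on the page, but only if it is similar enough. The sketch below uses Python's standard `difflib.SequenceMatcher`; the function name `heal_locator` and the 0.6 threshold are assumptions chosen for illustration, and production systems typically weigh many more signals (element role, position, surrounding structure).

```python
from difflib import SequenceMatcher


def heal_locator(expected_label, candidates, threshold=0.6):
    """Return the candidate label most similar to the expected one, or None.

    Returning None signals that no candidate is close enough, so the agent
    should fail the step or escalate rather than guess.
    """
    best_label, best_score = None, 0.0
    for label in candidates:
        score = SequenceMatcher(None, expected_label.lower(), label.lower()).ratio()
        if score > best_score:
            best_label, best_score = label, score
    return best_label if best_score >= threshold else None
```

The threshold is the key design choice: set it too low and the agent may click the wrong control and mask a real defect; set it too high and trivial label changes break the run again.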

5) Analysis, prioritization, and learning

The final phase of the lifecycle focuses on extracting insights from test execution. Rather than simply reporting pass or fail results, agentic systems summarize failures, cluster similar issues, and highlight likely root causes. Over time, they learn which tests are flaky, which scenarios catch real defects, and which areas of the application deserve higher priority.
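Failure clustering can be approximated by normalizing error messages so that volatile details such as IDs and durations do not split otherwise identical failures. The following is a minimal sketch under that assumption; the function names and the sample messages in the usage note are hypothetical.

```python
import re
from collections import defaultdict


def signature(message):
    """Normalize a failure message: lowercase, replace numbers and hex IDs, collapse spaces."""
    normalized = re.sub(r"0x[0-9a-fA-F]+|\d+", "<n>", message.lower())
    return re.sub(r"\s+", " ", normalized).strip()


def cluster_failures(failures):
    """Group (test_name, message) pairs by their normalized message signature."""
    clusters = defaultdict(list)
    for test_name, message in failures:
        clusters[signature(message)].append(test_name)
    return dict(clusters)
```

With this normalization, "Timeout after 30s waiting for /api/orders/123" and "Timeout after 45s waiting for /api/orders/987" fall into the same cluster, which points triage at one likely root cause instead of two separate failures.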

In agentic testing environments, these insights create a continuous improvement loop – making each testing cycle smarter than the last.

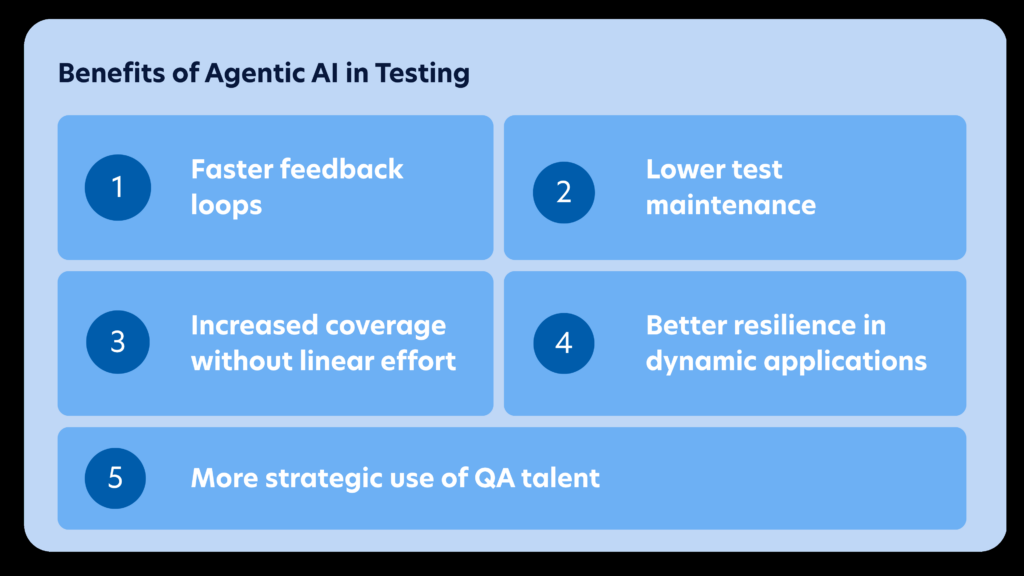

Key Benefits of Agentic AI Testing

Agentic AI Testing delivers measurable value when adopted thoughtfully. The benefits below are common across teams that move beyond proof-of-concept and operationalize agentic capabilities.

Faster feedback loops

Autonomous agents can execute targeted checks quickly, especially when integrated into CI/CD pipelines, so teams detect defects much earlier in the development cycle. Earlier detection reduces rework and improves delivery predictability. Studies have shown that fixing defects after release can cost 15–30 times more than fixing them during development.

Lower test maintenance

Automation maintenance is one of the biggest hidden costs in QA. Self-healing behaviors reduce time spent fixing broken scripts. Instead of debugging locators and updating flows for every UI change, teams can focus on validating business outcomes.

Increased coverage without linear effort

Agentic systems dynamically generate test scenarios based on application behavior, historical defects, and user flows. This allows teams to expand coverage across edge cases and complex workflows without manually writing large numbers of new tests.

Better resilience in dynamic applications

Modern applications change frequently due to continuous deployment and evolving UI frameworks. AI agents adapt to such changes by analyzing context and identifying alternative paths during execution. This helps reduce flaky tests, an issue that affects up to 16% of automated test failures.

More strategic use of QA talent

By automating repetitive test creation and maintenance tasks, agentic systems allow QA engineers to focus on higher-value activities such as risk-based testing, security validation, and exploratory testing.

High-Impact Use Cases of Agentic AI Testing for Different Industries

Agentic AI Testing is most effective when applied to industry-specific digital workflows, where complex user journeys, regulatory requirements, and frequent releases demand intelligent testing approaches.

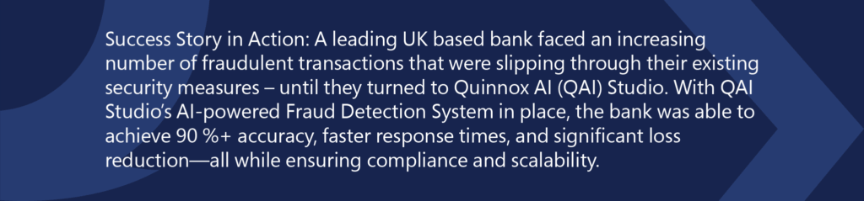

1. Banking and Financial Services

In banking platforms, agentic AI testing can autonomously validate transaction workflows, payment gateways, fraud detection rules, and regulatory compliance scenarios across UI and APIs.

For example, agents can simulate customer journeys like account transfers, loan approvals, and authentication flows to ensure reliability.

2. Retail and E-commerce

Retail applications rely heavily on seamless digital experiences – from product discovery to checkout. Agentic testing agents can continuously test search functionality, cart logic, promotions, pricing rules, and payment integrations across multiple devices. This is particularly valuable during high-traffic events like seasonal sales. Studies show around 49% of e-commerce testing teams already use AI-driven visual and functional testing tools to maintain UI accuracy across frequent releases.

3. Logistics and Supply Chain

In logistics platforms, agentic AI agents can validate workflows such as shipment tracking, warehouse management integrations, route optimization systems, and real-time inventory updates.

For example, agents can simulate end-to-end scenarios from order creation to delivery confirmation across APIs and mobile interfaces.

According to McKinsey & Company, AI adoption in supply chain operations can improve logistics efficiency by 15–20%, making reliable testing of these systems increasingly critical.

4. Environment and Sustainability Platforms

Environmental monitoring systems rely on sensor data ingestion, analytics dashboards, carbon tracking platforms, and regulatory reporting tools. Agentic AI testing can validate data pipelines, anomaly detection algorithms, and reporting workflows across large datasets. As organizations expand sustainability initiatives, reliable software validation becomes critical for ensuring accurate environmental reporting and regulatory compliance.

5. Insurance

Insurance platforms involve complex workflows such as policy issuance, premium calculations, claims processing, and fraud detection integrations. Agentic AI agents can simulate realistic customer journeys – from policy purchase to claim settlement – while validating underwriting rules and regulatory compliance.

Over 80% of insurers are investing in AI-driven technologies, which makes intelligent testing approaches essential for validating increasingly automated systems.

Implementation Roadmap: A Practical and Safe Approach

Adopting agentic AI testing should be deliberate and structured. Organizations that approach implementation incrementally – starting small, establishing governance, and expanding with clear metrics – tend to achieve stronger adoption and long-term value.

Step 1: Start with one critical journey

Choose a workflow with high business value and high maintenance pain – such as login, checkout, quote-to-cash, onboarding, or a key admin function. Define success criteria clearly.

Step 2: Define goals and guardrails

Specify what the agent should validate, what data it may use, which environments are allowed, and when it must escalate to a human. Guardrails are essential for responsible autonomy.
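Guardrails of this kind can be expressed as a small policy that every proposed agent action passes through. The sketch below is one possible shape, not a reference implementation: the `Guardrails` fields, the three decision values, and the 0.8 confidence default are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    """Hypothetical policy describing where and how an agent may act."""
    allowed_environments: frozenset
    forbidden_actions: frozenset
    min_confidence: float = 0.8  # below this, a human must decide


def decide(guardrails, environment, action, confidence):
    """Return 'block', 'escalate', or 'proceed' for a proposed agent action."""
    if environment not in guardrails.allowed_environments:
        return "block"
    if action in guardrails.forbidden_actions:
        return "block"
    if confidence < guardrails.min_confidence:
        return "escalate"
    return "proceed"
```

Hard limits (environment, forbidden actions) are checked before confidence, so a highly confident agent still cannot act outside its permitted scope.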

Step 3: Integrate into CI/CD gradually

Begin with nightly execution to collect baseline stability and healing metrics. Then expand to pull-request smoke suites and change-based test selection.
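Change-based test selection can be sketched as a lookup from changed files to the tests that cover them, with a smoke suite that always runs. The mapping format below (test name to covered path prefixes) and all names are assumptions for illustration; real pipelines usually derive this mapping from coverage data or dependency graphs.

```python
def select_tests(changed_files, coverage_map, smoke_tests):
    """Pick the smoke suite plus every test whose covered paths include a changed file.

    coverage_map maps a test name to a list of path prefixes that test exercises.
    """
    selected = set(smoke_tests)
    for test_name, prefixes in coverage_map.items():
        if any(path.startswith(prefix) for path in changed_files for prefix in prefixes):
            selected.add(test_name)
    return sorted(selected)
```

On a pull request that touches only checkout code, this would run the smoke suite and the checkout tests while leaving unrelated suites for the nightly run.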

Step 4: Establish observability

Track what changed, how the agent adapted, why it made decisions, and what it learned. Strong logging and audit trails are key for trust and governance.
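An audit trail for these four questions can be as simple as one structured record per agent decision, written as a JSON line to an append-only log. The field names below are hypothetical and chosen to mirror the questions above; adapt them to whatever logging pipeline is already in place.

```python
import json
from datetime import datetime, timezone


def audit_record(run_id, step, what_changed, adaptation, reason, timestamp=None):
    """Serialize one agent decision as a single JSON line."""
    ts = timestamp if timestamp is not None else datetime.now(timezone.utc)
    entry = {
        "timestamp": ts.isoformat(),
        "run_id": run_id,
        "step": step,
        "what_changed": what_changed,  # what the agent observed to be different
        "adaptation": adaptation,      # how it adapted
        "reason": reason,              # why it chose that adaptation
    }
    return json.dumps(entry, sort_keys=True)
```

Keeping each record on a single line makes the log easy to append to, search, and replay when reviewing why an agent behaved as it did.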

Step 5: Expand coverage intentionally

Once the first journey is stable, add adjacent flows. Reuse components, build a library of trusted validations, and standardize reporting.

Governance, Risk, and Best Practices

Autonomy in testing must be paired with control. The most common risks include over-trusting generated tests, running agents in sensitive environments without data protections, and allowing uncontrolled self-modification of test logic.

Organizations can mitigate these risks through several best practices:

- Keep a human-in-the-loop for high-risk releases and ambiguous outcomes.

- Require approvals before promoting newly generated tests into production suites.

- Mask sensitive data and limit credential exposure.

- Maintain audit logs of agent actions and changes.

- Create clear escalation paths when agents encounter uncertainty.

With appropriate governance structures in place, agentic testing becomes a reliable accelerator for quality engineering rather than a source of operational risk.

KPIs to Measure Success

To evaluate ROI, track metrics that reflect both speed and quality:

- Regression cycle time – reduction in time required for full regression testing per release

- Test maintenance effort – hours spent fixing or updating automation scripts

- Flaky test rate – percentage reduction in unstable or inconsistent tests

- Coverage of critical user journeys – depth and breadth of business-critical scenario validation

- Defect leakage – number of defects escaping into production

- Mean time to triage – speed of diagnosing and understanding test failures

Tracking these metrics provides visibility into ROI and helps organizations scale agentic testing with confidence across larger testing programs.
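Two of these metrics are straightforward to compute from data most teams already have. The sketch below shows one common way to define them; both definitions are assumptions for illustration (here a test counts as flaky if repeated runs on the same code produce mixed results, and defect leakage is expressed as a share of all defects found rather than a raw count).

```python
def flaky_test_rate(history):
    """Share of tests with mixed pass/fail results across reruns of the same code.

    history maps a test name to a list of booleans (True = pass).
    """
    if not history:
        return 0.0
    flaky = sum(1 for results in history.values() if len(set(results)) > 1)
    return flaky / len(history)


def defect_leakage(found_in_production, found_before_release):
    """Share of all known defects that escaped into production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0
```

Tracking both as ratios makes releases of different sizes comparable over time.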

The Bottom Line: From Testing as a Task to Testing as Software

Software quality is entering a new phase. As applications become more complex and release cycles accelerate, traditional testing models built around manual effort and static automation are no longer sufficient. The next evolution of quality assurance lies in intelligent, autonomous, and continuously learning systems, where AI agents validate applications proactively and ensure that digital experiences remain reliable as software evolves.

This transformation is part of a broader shift in enterprise technology delivery. Organizations are increasingly moving toward the Services-as-Software (SaS) paradigm, where capabilities traditionally delivered through human-driven services are reimagined as platform-based, AI-powered, and outcome-driven systems. In this model, IT services are no longer limited to tools and processes – they become intelligent platforms that continuously deliver measurable business outcomes.

Within this framework, testing itself must evolve. Rather than functioning as a discrete phase in the development lifecycle, quality assurance must become an embedded, always-on capability that operates seamlessly across development, integration, and production environments.

Quinnox brings this vision to life through Application Testing as Software (ATaS), powered by the AI-driven test automation platform. By combining agentic AI, intelligent automation, and analytics-driven insights, ATaS enables organizations to move beyond fragmented testing approaches and toward continuous, autonomous quality validation.

The result is a testing ecosystem that scales with modern digital platforms – accelerating releases, reducing maintenance overhead, and ensuring that applications consistently deliver reliable and high-quality user experiences.

As enterprises continue their shift toward the SaS model, testing will no longer be viewed as a supporting activity. Instead, it will operate as a strategic quality engine – autonomous, scalable, and directly aligned with business outcomes.

Connect with Quinnox experts to explore how ATaS can accelerate intelligent, autonomous testing.

FAQs Related to Agentic AI Testing

What is agentic AI testing?

Agentic AI testing uses autonomous AI agents that can design, execute, adapt, and analyze tests with minimal human intervention. Unlike traditional automation, these agents understand context, learn from past runs, and continuously improve testing coverage across applications.

How does agentic AI testing differ from traditional automation?

Traditional automation relies on predefined scripts and manual maintenance, while agentic AI testing uses intelligent agents that can generate test scenarios, self-heal scripts, adapt to application changes, and prioritize risks automatically.

Which types of applications can agentic AI testing be applied to?

Agentic AI testing can be applied across web, mobile, API, enterprise platforms, and microservices-based applications. It is particularly valuable for systems with frequent releases, complex workflows, and large-scale integrations.

How does agentic AI testing improve test coverage?

AI agents can generate multiple test variations, explore edge cases, and analyze production data to identify high-risk scenarios. This allows organizations to expand coverage without proportionally increasing manual test creation.

How do organizations get started with agentic AI testing?

Most organizations begin with a high-impact user journey or regression suite, integrate agentic testing into their CI/CD pipeline, and gradually expand coverage while establishing governance, observability, and performance metrics.