The digital landscape in 2026 has reached a critical inflection point. A mobile-first strategy is no longer a competitive differentiator. It is a baseline requirement for enterprise relevance. Mobile applications are no longer peripheral channels supporting the business. They are the business. They drive revenue, shape brand perception, and increasingly define customer loyalty.

Yet while mobile experiences have become mission-critical, the quality assurance models that underpin them remain structurally misaligned with today’s realities.

Mobile ecosystems are now sprawling, fragmented, and deeply personalized. Enterprises must support thousands of device combinations, rapid operating system releases, hybrid architectures, and continuously evolving user journeys. At the same time, development velocity has accelerated under DevOps and Agile models, compressing release cycles from months to weeks or even days.

According to Gartner’s 2026 IT Spending Forecast, global software spending is expected to grow by 14.7%, driven largely by AI-led automation and intelligent platforms. This growth reflects a deeper truth. Software quality, particularly on mobile, is no longer a technical concern. It is a strategic risk variable.

Traditional QA approaches were never designed for this level of scale, speed, or complexity. Manual testing struggles to keep pace. Script-based automation breaks under constant UI change. And coverage-driven testing models create the illusion of quality without guaranteeing real-world resilience.

The core question for enterprise leaders is not whether mobile quality matters. It is whether existing QA architectures can sustain the velocity and complexity of modern digital ecosystems. AI in mobile testing represents a structural redesign of how quality is engineered – not an incremental tooling upgrade.

What AI in Mobile Testing Really Means

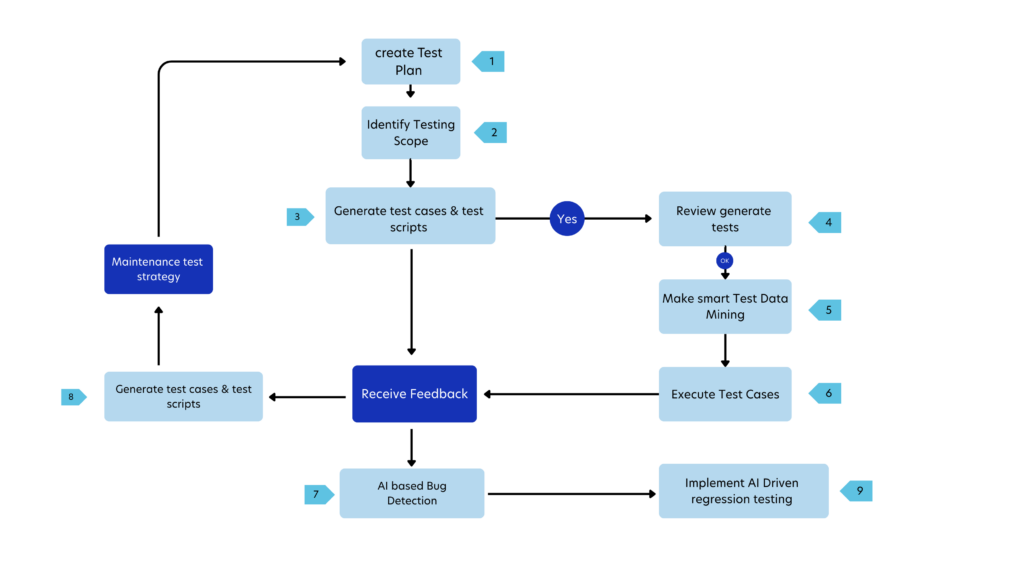

AI in mobile testing refers to the application of machine learning, computer vision, and data-driven intelligence across the quality assurance lifecycle. Unlike traditional automation, which executes predefined scripts, AI introduces adaptability, context awareness, and prediction.

With scripted automation, a tester writes exact steps, the script executes them, and even a minor UI change can cause the test to fail – regardless of whether the underlying functionality still works. This makes automation brittle and maintenance-heavy.

AI-driven testing works differently. It learns patterns from the application, understands relationships between UI elements, analyzes historical defect and execution data, and adapts when small changes occur. Instead of depending solely on static identifiers or fixed paths, AI recognizes context – such as visual position, functional similarity, and past behavior.

The fundamental shift is from rule-based execution to intelligent adaptation. AI does not just run tests; it interprets changes, prioritizes risk, and evolves with the application, making mobile QA more resilient, scalable, and aligned with continuous delivery environments.

If you want a comprehensive overview of how testing frameworks have evolved and why application testing remains critical to digital success, explore our detailed guide on What Is Application Testing?

Why Traditional Mobile Testing Models Fail at Scale

As enterprises push mobile apps into global markets, traditional QA approaches – largely manual testing and rigid scripted automation – struggle to keep pace with real-world complexity. These approaches weren’t designed for the volume of devices, user scenarios, and rapid update cadence demanded today, and that leads to gaps in quality, missed defects, and poor user experience.

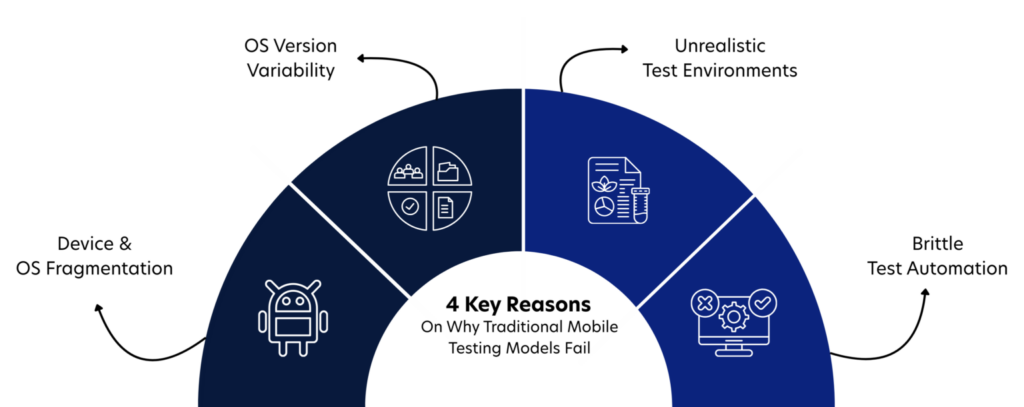

Here are four core reasons traditional mobile testing fails at enterprise scale:

1. Device & OS Fragmentation Overwhelms Coverage

The mobile landscape is highly fragmented. Thousands of Android device models, multiple screen sizes, and overlapping OS versions create an enormous testing matrix.

According to IDC, Android dominates the global mobile OS market with approximately 71–73% share as of early 2026, and within that ecosystem, multiple OS versions remain active simultaneously. Testing across all combinations manually is nearly impossible within tight release timelines.

When coverage is incomplete, users experience crashes and inconsistencies – often leading to uninstallations.

Why it fails at scale: Traditional models cannot economically or operationally validate every meaningful device-OS combination.
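To make the size of that matrix concrete, here is a minimal Python sketch. The device counts, OS versions, and usage shares are invented for illustration and are not market data. The helper `select_by_usage` is a hypothetical name; it shows one simple way to pick the configurations that cover most real users rather than attempting the full matrix.

```python
# Hypothetical inventory: these figures are illustrative assumptions only.
device_models = [f"device_{i}" for i in range(200)]
os_versions = ["12", "13", "14", "15"]
screen_classes = ["small", "normal", "large"]
locales = ["en", "de", "ja", "pt", "hi"]

# Every dimension multiplies the number of combinations to validate.
full_matrix_size = (
    len(device_models) * len(os_versions) * len(screen_classes) * len(locales)
)


def select_by_usage(usage_percent, target_percent):
    """Greedily pick the most-used configurations until the cumulative
    usage share (in whole percent) reaches the target."""
    chosen, covered = [], 0
    ranked = sorted(usage_percent.items(), key=lambda kv: kv[1], reverse=True)
    for config, share in ranked:
        if covered >= target_percent:
            break
        chosen.append(config)
        covered += share
    return chosen, covered


# Assumed usage shares per (device, OS) pair, in percent of sessions.
usage = {
    ("phone_a", "14"): 40,
    ("phone_b", "13"): 30,
    ("phone_c", "14"): 20,
    ("phone_d", "12"): 10,
}
chosen, covered = select_by_usage(usage, target_percent=85)
print(full_matrix_size, chosen, covered)
```

Even this small hypothetical inventory yields 12,000 combinations, while three configurations already account for 90% of the assumed sessions. Usage-weighted selection of this kind is a plain heuristic; AI-driven tools would typically refine it with defect and telemetry data.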

2. Frequent OS Updates Break Static Automation

Both Android and iOS release regular updates that modify UI behavior, permissions, and APIs. Script-based automation depends on fixed element identifiers and static UI paths. Even minor changes can cause tests to fail unnecessarily. Industry reports highlight that maintaining test scripts is one of the biggest challenges in mobile automation environments.

Why it fails at scale: QA teams spend excessive time repairing broken scripts instead of identifying genuine defects.

3. Real-World Conditions Are Hard to Simulate

Mobile users operate in unpredictable environments – fluctuating networks, low battery, background app interference, and memory constraints. Google indicates that poor performance and crashes significantly increase abandonment rates. Yet traditional test environments often simulate ideal lab conditions rather than real-world variability.

Why it fails at scale: Performance and UX issues surface only after production release, when user impact is already significant.

4. Manual & Rigid Automation Cannot Keep Up with CI/CD

Modern enterprises deploy updates weekly or even daily. Continuous Integration and Continuous Delivery (CI/CD) require rapid regression cycles. Traditional models rely on large, static regression suites that consume significant time and infrastructure resources. Running thousands of test cases for every small code change slows down releases and inflates QA costs.

Why it fails at scale: Testing becomes a bottleneck rather than an accelerator in DevOps-driven environments.

This is where AI-driven mobile application testing services become transformative.

Instead of relying on static scripts and brute-force regression, AI introduces intelligence into the QA lifecycle. It analyzes historical defects, understands UI patterns, evaluates code changes, and prioritizes risk-based testing. It adapts automatically to minor UI shifts and focuses validation efforts where they matter most. Rather than expanding QA effort linearly with application complexity, AI enables scalable quality engineering.

And from here, mobile testing evolves – from manual validation to intelligent automation, from reactive bug detection to predictive quality assurance.

Core Capabilities of AI-Driven Mobile Testing Across Platforms

Modern mobile applications rarely exist in isolation – enterprises typically maintain Android, iOS, and often hybrid/web versions of the same app. Ensuring consistent quality across all these platforms – with different UI behaviors, OS quirks, and performance profiles – is one of the toughest challenges QA teams face.

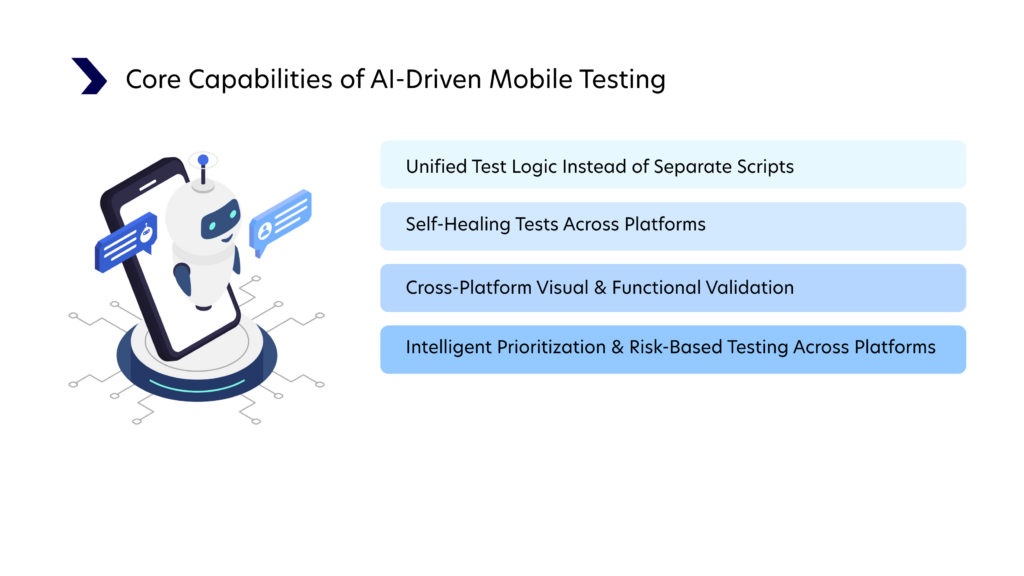

AI drives four major improvements over traditional cross-platform approaches:

1. Unified Test Logic Instead of Separate Scripts

Traditionally, QA teams write:

- One set of tests for Android

- A second for iOS

- A third for hybrid or web variants

Each suite often duplicates logic with slight differences for UI locators, navigation patterns, and gestures – a huge maintenance burden.

AI-powered frameworks, such as AI-enhanced versions of tools like Qyrus, let you write intent-based tests – for example, “log in, verify dashboard loads” – that run on both Android and iOS without rewriting platform-specific selectors. This works because the AI layer combines visual understanding with contextual recognition of UI elements, rather than relying on brittle static identifiers.
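The idea of one intent-based flow running on two platforms can be sketched in a few lines of Python. This is not the API of Qyrus or any other product: `Step`, `FakeDriver`, and the locator strings are hypothetical, and the role-to-locator lookup is a plain dictionary standing in for the learned visual and contextual matching described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    action: str       # e.g. "type", "tap", "assert_visible"
    target: str       # semantic role, not a platform selector
    value: str = ""


# One flow, written once, in terms of intent.
LOGIN_FLOW = [
    Step("type", "username_field", "demo"),
    Step("type", "password_field", "secret"),
    Step("tap", "login_button"),
    Step("assert_visible", "dashboard"),
]


class FakeDriver:
    """Stand-in for a device driver: records calls instead of touching a device."""

    def __init__(self, platform, role_map):
        self.platform = platform
        self.role_map = role_map   # an AI tool would infer this mapping
        self.log = []

    def perform(self, step):
        locator = self.role_map[step.target]
        self.log.append((step.action, locator, step.value))


def run(flow, driver):
    for step in flow:
        driver.perform(step)
    return driver.log


# Hypothetical locators for each platform.
android = FakeDriver("android", {
    "username_field": "id=com.example:id/user",
    "password_field": "id=com.example:id/pass",
    "login_button": "id=com.example:id/login",
    "dashboard": "id=com.example:id/home",
})
ios = FakeDriver("ios", {
    "username_field": "accessibility=usernameField",
    "password_field": "accessibility=passwordField",
    "login_button": "accessibility=loginButton",
    "dashboard": "accessibility=dashboardView",
})

android_log = run(LOGIN_FLOW, android)
ios_log = run(LOGIN_FLOW, ios)
```

The flow itself never mentions a selector, so the only platform-specific piece is the mapping from roles to elements, which is the part an AI-assisted tool aims to resolve automatically.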

2. Self-Healing Tests Across Platforms

One of the worst drains on QA resources is fixing tests every time a UI changes slightly. Traditional automation breaks and stops, even if the app’s logic hasn’t changed.

AI adds self-healing capabilities – tests automatically adjust to minor UI updates because the system learns element relationships, positions, and naming patterns.

In a cross-platform context, this means one test suite can continue to operate across both Android and iOS even as UI evolves – dramatically reducing script failure rates and maintenance effort. This also improves the reliability of tests executed against nightly builds in CI/CD pipelines.

Example: If a button label changes from “Checkout” to “Buy Now,” AI recognizes equivalent UI intent and adapts, instead of failing the test.
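A simplified version of that healing step might look like the Python sketch below. It is an assumption-laden illustration, not how any particular tool works: the weights, the 0.7 threshold, and the `Element` fields are invented, and a hand-written weighted score stands in for a trained model. When the exact text match fails, the locator falls back to the candidate that best matches the last known fingerprint of the element.

```python
from dataclasses import dataclass
from difflib import SequenceMatcher


@dataclass
class Element:
    resource_id: str
    text: str
    kind: str
    x: int
    y: int


def similarity(a, b):
    return SequenceMatcher(None, a, b).ratio()


def score(candidate, known):
    """Weighted similarity to the last known-good element (weights are assumptions)."""
    id_score = similarity(candidate.resource_id, known.resource_id)
    kind_score = 1.0 if candidate.kind == known.kind else 0.0
    distance = abs(candidate.x - known.x) + abs(candidate.y - known.y)
    position_score = max(0.0, 1.0 - distance / 500)
    text_score = similarity(candidate.text.lower(), known.text.lower())
    return (0.4 * id_score + 0.2 * kind_score
            + 0.3 * position_score + 0.1 * text_score)


def find_with_healing(screen, known, threshold=0.7):
    """Return (element, healed). Try the exact label first, then the best fuzzy match."""
    for element in screen:
        if element.text == known.text:
            return element, False
    best = max(screen, key=lambda el: score(el, known), default=None)
    if best is not None and score(best, known) >= threshold:
        return best, True
    return None, False


# Fingerprint recorded when the test last passed.
known = Element("btn_checkout", "Checkout", "button", 540, 1800)

# Current screen: the label changed, the id and position barely moved.
screen = [
    Element("btn_cart_back", "Back", "button", 60, 120),
    Element("btn_checkout", "Buy Now", "button", 540, 1810),
    Element("lbl_total", "Total", "label", 540, 1600),
]
match, healed = find_with_healing(screen, known)
```

Here the “Buy Now” button is selected and flagged as healed, so the run can continue while the change is reported for review. Keeping a threshold matters: without it, the fallback would silently click an unrelated element when the real one has been removed.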

3. Cross-Platform Visual & Functional Validation

AI doesn’t just interact with UI elements – it understands visual context. Tools with computer vision and pattern recognition can spot inconsistencies in layout, alignment, icons, responsiveness, and other UX elements across platforms. In contrast, traditional frameworks often miss these because they verify only code-level properties or element presence.

This matters because UI inconsistencies – even if trivial in code – can drastically affect user perception and brand experience.
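As a rough illustration of a cross-platform layout check, the Python sketch below compares element bounding boxes after normalizing them by screen size. It assumes the boxes have already been extracted (for example, by a vision model or from the view hierarchy); the function names, the 5% tolerance, and the sample coordinates are hypothetical, and a real computer-vision pipeline would be considerably richer.

```python
def normalize(box, screen_w, screen_h):
    """Convert an (x, y, width, height) box in pixels to fractions of the screen."""
    x, y, w, h = box
    return (x / screen_w, y / screen_h, w / screen_w, h / screen_h)


def layout_drift(android, ios, tolerance=0.05):
    """Report roles that are missing on one platform or whose normalized
    box differs by more than the tolerance on any dimension."""
    issues = {}
    roles = sorted(set(android["elements"]) | set(ios["elements"]))
    for role in roles:
        a = android["elements"].get(role)
        b = ios["elements"].get(role)
        if a is None or b is None:
            issues[role] = "missing on " + ("android" if a is None else "ios")
            continue
        na = normalize(a, android["width"], android["height"])
        nb = normalize(b, ios["width"], ios["height"])
        worst = max(abs(p - q) for p, q in zip(na, nb))
        if worst > tolerance:
            issues[role] = f"drift {worst:.2f}"
    return issues


# Hypothetical boxes for the same screen on two devices.
android_screen = {
    "width": 1080, "height": 2400,
    "elements": {
        "logo": (40, 100, 200, 80),
        "cta": (140, 2000, 800, 160),
        "badge": (900, 100, 100, 100),
    },
}
ios_screen = {
    "width": 390, "height": 844,
    "elements": {
        "logo": (14, 35, 72, 28),
        "cta": (50, 500, 290, 56),   # sits much higher than on Android
    },
}
issues = layout_drift(android_screen, ios_screen)
```

In this sample the logo lines up on both platforms, the call-to-action button is flagged for vertical drift, and the badge is reported as missing on iOS. An element-presence check alone would catch only the last of these.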

4. Intelligent Prioritization & Risk-Based Testing Across Platforms

Modern AI systems don’t just execute tests – they choose which tests matter most. By analyzing:

- Change impacts (from code commits)

- Historical defect patterns

- Usage analytics

- Platform-specific performance variances

AI can prioritize tests that are most likely to uncover defects. Instead of flat regression suites, cross-platform testing becomes risk-driven. This approach reduces test execution time while maintaining or improving coverage – especially important when delivering frequent releases.
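The following Python sketch shows one way such a ranking could work. It is a hand-tuned heuristic under stated assumptions, not a trained model: the weights, the `RegressionTest` fields, and the sample figures are all invented. Each test gets a risk score from change impact, historical failure rate, and usage weight, and the highest-risk tests are selected until a time budget is used up.

```python
from dataclasses import dataclass


@dataclass
class RegressionTest:
    name: str
    covers: set            # modules the test exercises
    failure_rate: float    # historical fraction of failing runs
    usage_weight: float    # share of user sessions touching this flow
    minutes: int           # typical execution time


def risk_score(test, changed_modules):
    """Combine the three signals; the weights are illustrative assumptions."""
    if test.covers:
        touched = len(test.covers & changed_modules) / len(test.covers)
    else:
        touched = 0.0
    return 0.5 * touched + 0.3 * test.failure_rate + 0.2 * test.usage_weight


def select_tests(tests, changed_modules, budget_minutes):
    """Pick tests in descending risk order while they fit the time budget."""
    ranked = sorted(tests, key=lambda t: risk_score(t, changed_modules),
                    reverse=True)
    selected, used = [], 0
    for test in ranked:
        if used + test.minutes <= budget_minutes:
            selected.append(test.name)
            used += test.minutes
    return selected


suite = [
    RegressionTest("checkout", {"cart", "payment"}, 0.20, 0.6, 10),
    RegressionTest("login", {"auth"}, 0.05, 0.9, 5),
    RegressionTest("profile", {"profile"}, 0.02, 0.1, 8),
    RegressionTest("search", {"search", "catalog"}, 0.10, 0.7, 6),
]

# A commit touched only the payment module; 20 minutes are available.
selected = select_tests(suite, changed_modules={"payment"}, budget_minutes=20)
print(selected)  # ['checkout', 'login']
```

With a 20-minute budget, the checkout test runs first because it covers the changed module, followed by the heavily used login flow. The remaining tests are deferred to a fuller nightly run rather than dropped, which is one common way to keep coverage while shortening per-commit feedback.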

Business Value of AI-Led Mobile Quality

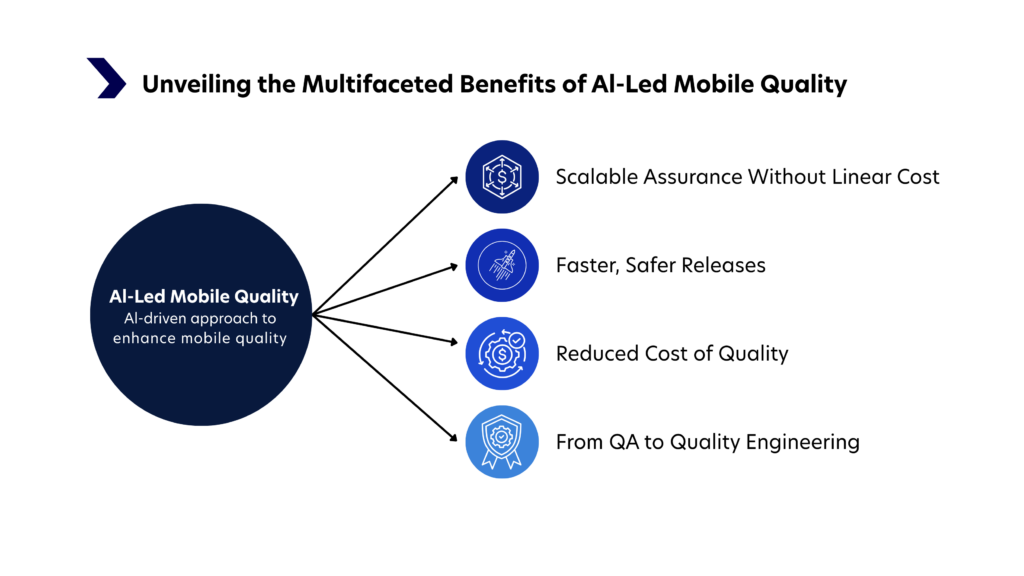

AI in mobile testing is not just a technical upgrade. It changes the economics of quality. When implemented correctly, it transforms QA from a reactive cost center into a strategic growth enabler. Here’s how.

1. Scalable Assurance Without Linear Cost

Traditional mobile QA scales linearly – more features and devices require more testers and effort. AI breaks that pattern by enabling intelligent test selection, parallel execution, and self-healing automation. Enterprises can validate thousands of scenarios across platforms without proportionally increasing headcount. Testing capacity grows with product complexity, but costs remain controlled.

2. Faster, Safer Releases

In CI/CD environments, static regression suites slow delivery. AI analyzes code changes and prioritizes high-risk areas, reducing unnecessary test execution while maintaining coverage. Feedback cycles become shorter, and release confidence improves. Organizations gain speed without sacrificing stability.

3. Reduced Cost of Quality

Defects discovered late are expensive – impacting revenue, reputation, and customer trust. AI identifies risk early, optimizes coverage, and detects performance issues before release. Catching problems earlier in the lifecycle significantly lowers remediation costs and protects business value.

4. From QA to Quality Engineering

With repetitive execution automated, QA teams shift from script maintenance to strategic quality engineering. The focus moves to risk management, experience validation, and resilience. Quality becomes embedded across the lifecycle – not just a final gate before release.

The Quinnox Perspective on Intelligent QA

At Quinnox, we view AI-led mobile quality as a strategic transformation journey, not a tooling decision. Real impact comes from embedding intelligence across the testing lifecycle, from design to release. By integrating predictive analytics, risk-based prioritization, and adaptive automation into delivery pipelines, we help enterprises shift from reactive defect detection to proactive, risk-driven quality assurance.

Our AI-powered test automation capabilities enable organizations to scale coverage, accelerate releases, and strengthen release confidence – without increasing operational overhead. Quality is aligned directly with business outcomes, ensuring speed, scalability, and trust move forward together.

As Bighneswar Parida, Testing Head, Quinnox, states: “Enterprise mobile quality is no longer about running more tests. It is about applying intelligence where it matters most. AI enables QA to become a strategic driver of digital trust.”

If you’re ready to engineer quality intelligently and modernize your mobile QA strategy, get in touch with us.

Director, Marketing, Quinnox

FAQs on AI in Mobile Testing

AI in mobile testing is highly suitable for enterprise-scale applications because it addresses device fragmentation, frequent releases, and complex integrations. By introducing adaptive, data-driven validation, AI enables enterprises to maintain quality at scale without linear increases in cost or time.

AI can automate test generation, self-healing of scripts, visual validation, regression prioritization, and defect trend analysis. These capabilities go beyond execution, enabling intelligent decision-making across the testing lifecycle.

The primary benefits include faster release cycles, broader and more relevant coverage, reduced maintenance effort, improved defect detection accuracy, and lower overall cost of quality across enterprise environments.

AI does not replace manual testing. It augments it by automating repetitive, large-scale tasks and freeing human testers to focus on exploratory testing, usability analysis, and complex scenario validation.

Most enterprises begin seeing ROI within a few release cycles through reduced maintenance effort and faster feedback loops. Long-term ROI emerges through accelerated time-to-market, reduced defect leakage, and improved customer experience.